In this week’s edition of The Byte, Carol Zhu argues that voice AI’s biggest limitation isn’t speech generation quality; it’s input understanding. Using recent benchmarks and systems research, she shows how the dominant ASR→LLM→TTS “cascade” architecture strips away the paralinguistic cues humans rely on (tone, hesitation, sarcasm, uncertainty), while today’s end-to-end audio models often appear to reconstruct text-like representations internally and have not yet demonstrated robust use of the acoustic signal in public results.

The piece makes the case for a missing middle layer: audio-grounded intent, a representation built to capture communicative state and pragmatic meaning rather than just words. It also outlines what it would take to train and evaluate such a layer.

Spike Jonze released Her in 2013. Thirteen years later, the most advanced voice AI systems on the planet still do not reliably demonstrate the ability to distinguish between "that sounds great" said with relief, and "that sounds great" said through clenched teeth. Samantha felt surreal because she not only heard but listened and adjusted her model of Theodore in real time, across hundreds of hours, without him ever having to explain himself.

The voice AI industry has made extraordinary progress on everything it knows how to measure. However, the thing that matters most doesn't have a metric yet.

The Architecture That Won (And What It Can't Do)

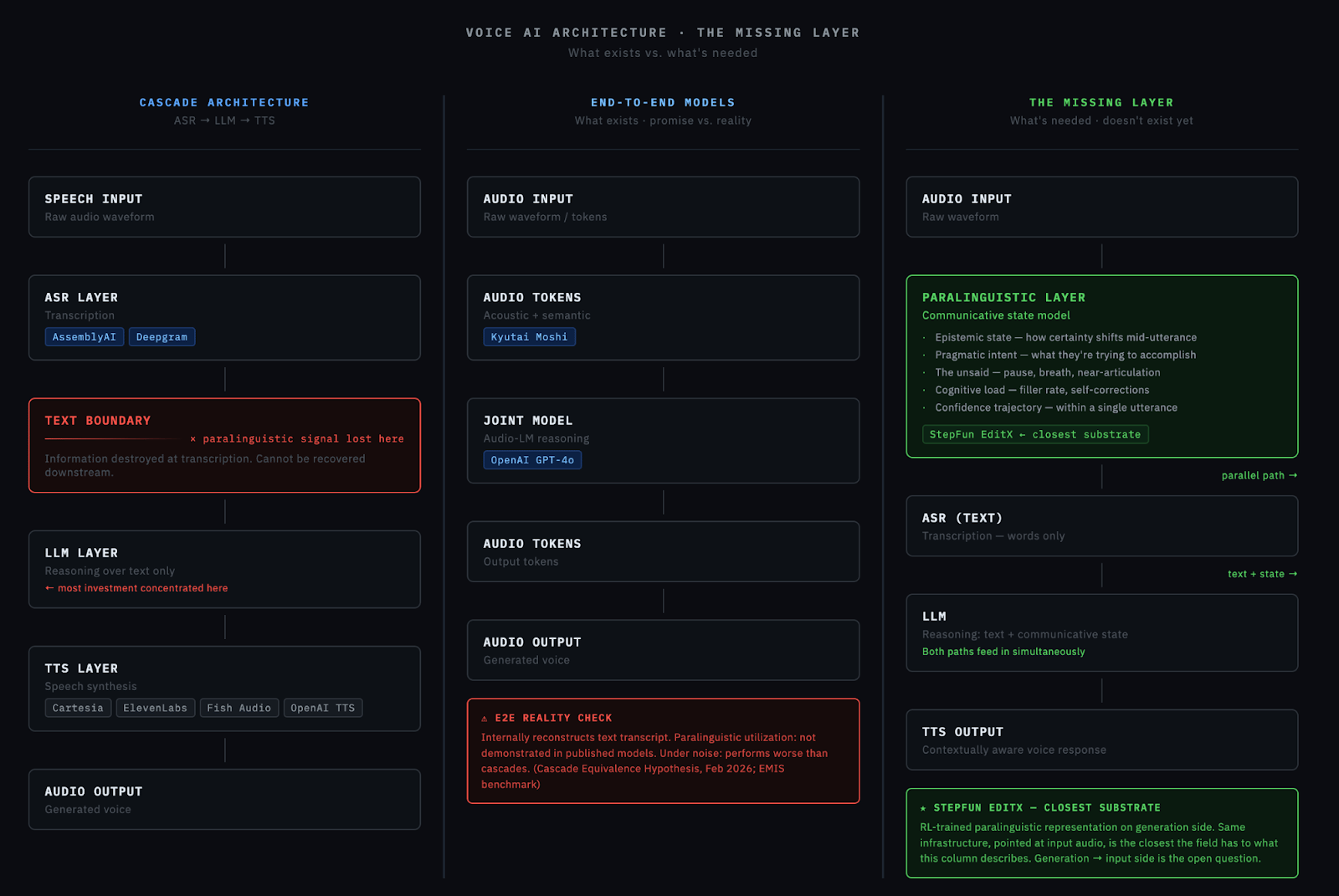

The dominant voice AI architecture is the cascade: ASR → LLM → TTS. Speech comes in, gets transcribed, gets reasoned about, gets spoken back. Modular. Debuggable. Each component can be improved and swapped independently, and utterance-level model routing becomes tractable because the text boundary gives you a clean intervention point. This architecture won for good reasons. But it has a structural flaw that no amount of component improvement can fix: it discards or flattens much of the paralinguistic information at the ASR → text boundary.

When a customer says “that sounds great,” the cascade passes along a short string of text. What it loses: whether those words carried genuine enthusiasm or resigned acceptance, and whether the speaker’s voice tightened slightly, suggesting doubt they haven’t articulated. The LLM downstream reasons over an impoverished representation of what was actually communicated.
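A minimal Python sketch makes the loss concrete. It assumes a toy utterance record carrying a transcript plus three prosodic measurements; the field names (`pitch_slope`, `energy_db`, `pre_pause_s`) and the `cascade_boundary` function are hypothetical illustrations, not any vendor’s API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Utterance:
    """A toy utterance: the words plus a few hypothetical prosodic measurements."""
    transcript: str
    pitch_slope: float   # hypothetical: rising (>0) vs. falling (<0) intonation
    energy_db: float     # hypothetical: loudness relative to the speaker's baseline
    pre_pause_s: float   # hypothetical: silence before the utterance, in seconds


def cascade_boundary(utt: Utterance) -> str:
    """What a text-only ASR -> LLM hand-off passes along: the transcript alone."""
    return utt.transcript


relieved = Utterance("that sounds great", pitch_slope=0.8, energy_db=3.0, pre_pause_s=0.1)
resigned = Utterance("that sounds great", pitch_slope=-0.6, energy_db=-4.0, pre_pause_s=1.4)

# The two utterances differ acoustically...
print(relieved == resigned)
# ...but the LLM downstream of the cascade receives exactly the same input.
print(cascade_boundary(relieved) == cascade_boundary(resigned))
```

Whatever the LLM does next, it cannot recover a distinction that never reached it.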

The End-to-End Promise

The alternative is end-to-end (E2E) models that process audio tokens directly and promise to preserve this information. OpenAI’s voice mode and Kyutai’s Moshi are public examples of this direction. But end-to-end models introduce their own problems. Reasoning quality degrades in audio-token-space versus text-token-space. Debuggability disappears. Safety and alignment become harder without an intermediate text layer to inspect.

A February 2026 paper, “The Cascade Equivalence Hypothesis,” tested four speech LLMs (Qwen2-Audio, Ultravox, Phi-4-MM, Gemini) and found that the E2E promise has not yet been delivered. The problem has two layers. Cascades structurally discard paralinguistic signals at transcription, so the information is gone before reasoning begins. And public results do not show that current E2E models have solved this: in many cases, their behavior is consistent with heavy reliance on text-like internal representations and limited use of the acoustic information they retain. Under noise, they perform worse than cascades, not just equivalently. Architectural access to paralinguistic signals may exist, but effective utilization has not yet been clearly demonstrated in public evaluations. What is happening inside proprietary systems at OpenAI, Google DeepMind, or other major labs is unknown.

The Token Problem: We Don’t Have the Right Categories

The field divides speech tokens into “acoustic tokens” (optimized for waveform reconstruction) and “semantic tokens” (optimized for linguistic meaning). A comprehensive late-2025 review found that many so-called “semantic tokens” appear to be more phonetic than semantic, capturing sound patterns more readily than communicative meaning. We don’t have the right vocabulary to describe what “understanding” audio means at the token level.

Recent benchmarks quantify how badly current models fail at paralinguistic understanding. LISTEN (October 2025) found that when text and audio emotion cues conflict (neutral words in an angry tone, for example), model accuracy collapses to 37–43%, near random. For purely paralinguistic scenarios with no lexical cues, accuracy is 12.5%, barely above chance. EMIS points the same way: when speech models are explicitly prompted to ignore word meaning and focus on acoustic emotion, they still predict based on word-level labels over 80% of the time. These results suggest that current models still struggle to separate what was said from how it was said.
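The conflict-condition results boil down to two numbers: how often a model matches the emotion carried by the voice, and how often it simply echoes the emotion implied by the words. A minimal sketch of that bookkeeping follows, assuming each test item is labeled with both a text-implied and an acoustically expressed emotion. This is not LISTEN’s or EMIS’s evaluation code, and the example data are invented for illustration.

```python
def conflict_metrics(examples):
    """Score predictions on cue-conflict examples only.

    Each example is a (text_label, audio_label, predicted_label) triple, where
    text_label is the emotion implied by the words and audio_label is the emotion
    carried by the voice. Returns (accuracy against the acoustic label,
    rate at which the prediction simply follows the lexical label).
    """
    # Keep only items where the words and the voice disagree.
    conflicts = [ex for ex in examples if ex[0] != ex[1]]
    if not conflicts:
        raise ValueError("no examples where text and audio cues disagree")
    n = len(conflicts)
    acoustic_accuracy = sum(pred == audio for _, audio, pred in conflicts) / n
    lexical_following = sum(pred == text for text, _, pred in conflicts) / n
    return acoustic_accuracy, lexical_following


# Invented predictions from a hypothetical model.
examples = [
    ("neutral", "angry", "neutral"),
    ("neutral", "angry", "neutral"),
    ("happy", "sad", "happy"),
    ("neutral", "angry", "angry"),
    ("happy", "happy", "happy"),  # cues agree, so this item is filtered out
]
print(conflict_metrics(examples))
```

A model that scores well on ordinary emotion benchmarks can still show a high lexical-following rate here, which is the failure these benchmarks isolate.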

The most practically advanced work is narrow: AssemblyAI’s Universal-Streaming model is trained to predict a special end-of-turn token, replacing crude silence-threshold approaches. It’s real progress. But knowing when someone stopped talking is far easier than knowing what they meant while talking.
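The difference between the two endpointing styles can be sketched as two decision rules. The silence-threshold rule below is the crude baseline; the learned rule assumes some trained model emits a per-frame end-of-turn probability. Both functions, their parameters, and the sample numbers are hypothetical, and this is not AssemblyAI’s implementation.

```python
def silence_threshold_endpoint(frame_energies, energy_floor=0.1, min_silent_frames=5):
    """Crude baseline: end the turn after N consecutive low-energy frames.

    Returns the frame index at which the turn is declared over, or None.
    """
    silent_run = 0
    for i, energy in enumerate(frame_energies):
        # Count consecutive quiet frames; any speech resets the counter.
        silent_run = silent_run + 1 if energy < energy_floor else 0
        if silent_run >= min_silent_frames:
            return i
    return None


def learned_endpoint(eot_probs, threshold=0.5):
    """Learned alternative: fire at the first frame whose (assumed) model-predicted
    end-of-turn probability reaches the threshold. Returns the index or None."""
    for i, p in enumerate(eot_probs):
        if p >= threshold:
            return i
    return None


# Hypothetical frame energies: speech, a mid-thought pause, more speech, final silence.
energies = [0.8, 0.7, 0.9, 0.02, 0.03, 0.01, 0.02, 0.04, 0.6, 0.8, 0.7,
            0.01, 0.01, 0.02, 0.01, 0.01]
# Hypothetical model outputs: low during the mid-thought pause, rising at the real end.
eot_probs = [0.0] * 11 + [0.2, 0.4, 0.7, 0.9, 0.95]

print(silence_threshold_endpoint(energies))  # cuts in during the mid-thought pause
print(learned_endpoint(eot_probs))           # waits for the actual end of the turn
```

The silence rule cannot tell a pause for thought from a pause as invitation; a learned predictor at least has the chance to, which is also why it says nothing yet about what the speaker meant.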

The TTS Herd and the Problem Nobody Is Solving

Look at where the investment went in 2024 and 2025. ElevenLabs v3, Fish Speech S2, StepFun EditX, Cartesia Sonic-3: a cluster of major TTS releases landing within months of one another.

The incentive structure is legible. TTS produces a demo. You play a clip, it sounds human, and the investor writes a check. Quality benchmarks are clean, and the finding that a standard LLaMA backbone plus rigorous RL training beat exotic architectures made the playbook copyable. VC incentives compound the effect: TTS revenue is demonstrable, while ‘improved paralinguistic understanding’ is a harder sell.

But this is not only herd behavior. The input side (robust streaming ASR, noise handling, and full semantic understanding) is genuinely harder. Foundational models like Whisper were designed for batch transcription, and bending them toward real-time use requires non-trivial engineering. Robust streaming ASR that holds up across the acoustic diversity of production traffic remains unsolved at scale.

The semantic understanding layer above transcription is harder still. TTS has a natural training signal: does it sound right? Input-side understanding has no clean equivalent. You are proxying either through emotion labels, which have a noisy ceiling, or through behavior prediction. The labeling bottleneck is a philosophical obstacle, not just an engineering one.

What the Voice AI Companies Have Learned

To understand where the real knowledge lives, we need to look at how the leading voice AI companies have approached speech and be honest about what their architectures actually are.

AssemblyAI

Latest: Universal-3 Pro (Feb 2026) introduces LLM-style prompt control over transcription output. While their Universal-Streaming model advanced semantic endpointing, their observable product emphasis remains on transcription infrastructure and turn/endpoint quality rather than higher-order communicative-state modeling.

ElevenLabs

Latest: v3 / v3-Alpha (2025–2026). The widest commercial footprint in the space, with highly expressive emotional tags for generation. Their generation-side understanding of paralinguistic signals appears far more mature than any public evidence of input-side understanding.

Cartesia

Latest TTS: Sonic-3 (late 2025). Built on State Space Models (Mamba) for sub-90ms latency and constant-time per-step inference, which suits always-on, on-device processing. In June 2025 they added Ink-Whisper for ASR: a fine-tuned Whisper, not a novel architecture. Given their SSM research roots, the open question is whether future Ink models will bring the same paradigm-level rethinking to ASR that Mamba brought to TTS.

Fish Audio

Latest: Fish Speech S2 (March 2026). Open-sourced VQGAN-based encoder with a Qwen3-4B autoregressive decoder, achieving high accuracy and sub-150ms latency. Purely a generation company; no paralinguistic input work published.

OpenAI

Voice mode (GPT-4o-Audio) is the most widely deployed end-to-end system. Despite the E2E framing, its observable behavior still shows misread sarcasm and poor pragmatic inference, and it trails specialized speech emotion recognition (SER) models on emotion classification.

Kyutai

Moshi (Sept 2024) achieved true full-duplex interaction. However, its ‘Inner Monologue’ mechanism predicts time-aligned text tokens before audio tokens, meaning the paralinguistic bottleneck still applies.

Google DeepMind

Gemini’s multimodal stack processes audio natively. Despite Google having arguably the largest proprietary training corpus and compute, publicly observable products still exhibit the same failure modes: misread tone and no demonstrated communicative-state modeling.

StepFun

Step-Audio 2 (July 2025) is genuinely end-to-end, with a continuous latent-space encoder: raw waveform in, no tokenization step. Their EditX work specializes in iterative paralinguistic editing (exhales, chuckles, hesitations). They are doing the most explicit work on paralinguistic signal manipulation, even if still on the generation side. Of all the companies in this space, StepFun has been the most audacious in rethinking model architecture from first principles, and they may be the closest public example of a stack that could incidentally provide the substrate this input-understanding problem actually requires.

What all of these companies share is hard-won knowledge about the paralinguistic layer on the output side. Nobody has solved it on the input side. Hume AI came closest commercially, training production-grade emotion recognition models to modulate response generation. But their framework reduces to categorical emotion labels rather than a communicative state model, and with the core engineering team reportedly now at DeepMind, it is unclear whether that earlier momentum remains at Hume.

StepFun’s EditX work demonstrates that RL can teach a model to represent paralinguistic signals with enough fidelity to reproduce them. The same representational infrastructure, pointed at incoming audio, is the closest the field has come to the input understanding layer this article describes.

The Missing Layer: Audio-Grounded Intent

What doesn’t exist and should: an intermediate representation between raw audio and text that preserves the signals humans actually use to understand each other. This layer would capture confidence trajectories (how certainty shifts within a single utterance), emotional dynamics (state changes during conversation, not just point-in-time labels), turn-taking signals (pause as invitation vs. thought), cultural communication patterns, and cognitive load indicators (speech rate, filler frequency, self-corrections, breathing patterns).
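Some of these cognitive load indicators can be approximated from a word-level timestamped transcript. The sketch below assumes a simple `Word(text, start, end)` record and a small, hypothetical English filler list; self-corrections and breathing patterns need richer signals and are not covered.

```python
from dataclasses import dataclass

# Hypothetical, deliberately tiny English filler inventory.
FILLERS = {"um", "uh", "er", "erm", "hmm"}


@dataclass
class Word:
    text: str
    start: float  # seconds from start of audio
    end: float    # seconds from start of audio


def cognitive_load_indicators(words, long_pause_s=0.5):
    """Rough proxies computed from a word-level timestamped transcript."""
    if not words:
        raise ValueError("need at least one word")
    span_s = words[-1].end - words[0].start  # includes pauses between words
    if span_s <= 0:
        raise ValueError("word timestamps must span a positive duration")
    tokens = [w.text.lower().strip(",.?!") for w in words]
    n_fillers = sum(t in FILLERS for t in tokens)
    gaps = [b.start - a.end for a, b in zip(words, words[1:])]
    return {
        # Non-filler words per second over the whole span.
        "speech_rate_wps": (len(tokens) - n_fillers) / span_s,
        "filler_rate": n_fillers / len(tokens),
        "long_pauses": sum(g >= long_pause_s for g in gaps),
    }


words = [Word("I", 0.0, 0.2), Word("um", 0.3, 0.6), Word("think", 1.4, 1.7),
         Word("we", 1.8, 1.9), Word("can", 1.9, 2.1), Word("uh", 2.2, 2.5),
         Word("renew", 2.6, 3.0)]
print(cognitive_load_indicators(words))
```

Computed over a sliding window rather than a whole utterance, the same quantities become trajectories, which is closer to what the layer described above would need.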

The Industry Thinks the Gap Is Emotion. It’s Not.

Emotion classification is the paralinguistic equivalent of keyword spotting. Labeling an utterance “happy” or “angry” tells you almost nothing about what happens next. The best specialized SER models achieve 66–78% accuracy; even 100% wouldn’t solve the problem, because knowing someone sounds angry doesn’t tell you why, what they’ll do, or what they need right now.

The real gap is pragmatic inference. What’s needed is a communicative state model: epistemic state (what the speaker believes right now, how certainty shifts), pragmatic intent (what they’re trying to accomplish by saying this in this way), the unsaid (the pause before an answer, the breath suggesting they almost said something else), and a model of the listener (are they adjusting because they think I don’t understand?). Emotion classification alone does not get you there.

The Hard Epistemological Problem

Humans often don’t understand their own intent. A customer doesn’t always know whether they’re going to buy. Intent is often constructed after the fact.

This helps explain why emotion recognition systems often plateau at 66–78% accuracy in benchmark settings. The labels are inherently noisy because the thing being labeled isn’t objectively accessible. Audio alone is fundamentally insufficient: if facial expressions are ambiguous without context, audio-only clips are even more impoverished. Real communication is deeply ambiguous.

What actually works? Interpretive models that are useful without being “true.” A skilled therapist doesn’t discover a patient’s objective intent — they construct a working model that changes behavior. AI should do the same. Not a single label. Not pure behavior prediction. Ranked working hypotheses.

The Technical Path Forward

The fix isn’t better emotion labels. It’s a different architecture entirely.

First, outcome-grounded representation learning. Stop training on subjective emotion labels. Train on observable outcomes: the customer renews, the deal closes, the student passes.
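As a minimal sketch of the training target, not of the representation learner itself: the code below fits a plain logistic regression (a swapped-in stand-in) from per-conversation paralinguistic features to a logged binary outcome such as renewal. The feature choice (a filler rate and a count of long pauses) and the data are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, x):
    """Predicted probability of the good outcome for one feature vector."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train_outcome_model(features, outcomes, lr=0.1, epochs=500):
    """Batch gradient descent on logistic loss.

    features: list of per-conversation feature vectors.
    outcomes: observed labels, 1 = good outcome (e.g. renewed), 0 = not.
    """
    n, d = len(features), len(features[0])
    weights, bias = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for x, y in zip(features, outcomes):
            err = predict(weights, bias, x) - y  # gradient of log loss w.r.t. the logit
            for j in range(d):
                grad_w[j] += err * x[j]
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


# Hypothetical per-conversation features: [filler_rate, long_pauses]; outcome 1 = renewed.
X = [[0.05, 0], [0.10, 1], [0.08, 0], [0.30, 3], [0.35, 4], [0.25, 3]]
y = [1, 1, 1, 0, 0, 0]
weights, bias = train_outcome_model(X, y)
print(predict(weights, bias, [0.06, 0]), predict(weights, bias, [0.32, 4]))
```

The point is where the label comes from: an observed outcome, not an annotator’s guess about emotion.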

Second, multi-hypothesis state modeling. A single label is always wrong. The architecture needs to output a weighted distribution across interpretive frameworks — ranked working hypotheses, just like a human listener.
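A minimal way to represent ranked working hypotheses is a normalized distribution over competing interpretations rather than a single argmax label. The sketch below assumes some upstream model has already produced a raw score per interpretation and uses a softmax to normalize; the hypothesis names and scores are invented.

```python
import math


def rank_hypotheses(scores, temperature=1.0):
    """Turn raw scores for competing interpretations into a ranked distribution.

    scores: dict mapping an interpretation to a raw (hypothetical) model score.
    Returns a list of (interpretation, probability) pairs, highest first.
    """
    if not scores:
        raise ValueError("need at least one hypothesis")
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {h: s / temperature for h, s in scores.items()}
    m = max(scaled.values())  # subtract the max for numerical stability
    exps = {h: math.exp(s - m) for h, s in scaled.items()}
    total = sum(exps.values())
    dist = {h: e / total for h, e in exps.items()}
    return sorted(dist.items(), key=lambda kv: kv[1], reverse=True)


ranked = rank_hypotheses({
    "genuine agreement": 1.2,
    "polite deflection": 1.0,
    "resigned acceptance": 0.3,
})
for hypothesis, p in ranked:
    print(f"{hypothesis}: {p:.2f}")
```

In this toy example the top interpretation holds less than half the probability mass, which is exactly the information a single label would throw away.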

Third, a parallel integration architecture. ASR handles the words. A parallel path handles the communicative state. Both get passed to the LLM. You don’t evaluate this on classification accuracy. You evaluate it on intervention: did the system’s model lead to a better response?
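The parallel path and the intervention-based evaluation can be sketched together. `build_llm_input` assumes the ASR transcript and a list of weighted state hypotheses are available side by side; `intervention_lift` compares good-outcome rates between responses generated with and without the state path. Names, payload shape, and data are hypothetical.

```python
import json
from statistics import mean


def build_llm_input(transcript, state_hypotheses):
    """Parallel integration: words from ASR and communicative-state hypotheses
    from a separate audio path are passed to the LLM side by side."""
    return json.dumps({
        "transcript": transcript,
        "communicative_state": [
            {"hypothesis": h, "weight": round(w, 3)} for h, w in state_hypotheses
        ],
    })


def intervention_lift(control_outcomes, treatment_outcomes):
    """Evaluate on intervention, not classification: difference in the rate of
    good outcomes (1 = good) between responses generated with the state path
    (treatment) and without it (control)."""
    return mean(treatment_outcomes) - mean(control_outcomes)


payload = build_llm_input(
    "that sounds great",
    [("polite deflection", 0.45), ("genuine agreement", 0.35)],
)
print(payload)

# Invented outcomes for illustration only.
control = [1, 0, 0, 1, 0]
treatment = [1, 1, 0, 1, 0]
print(intervention_lift(control, treatment))
```

A real evaluation would randomize which conversations get the state path and test whether the lift is statistically meaningful; this sketch only shows the quantity being measured.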

Why This Matters

Sales: surfaces “the buyer’s interest dropped when you mentioned timeline.” Healthcare: contextualizes vocal biomarkers within conversational dynamics. Customer experience: identifies when frustration shifted to resignation, the moment you’ve lost them. Education: detects confusion from prosodic signals before a student articulates it.

We’ve built AI that can hear. We’ve built AI that can think. We’ve built AI that can speak. What we haven’t built is AI that can listen. The companies that build the layers bridging perception to inference — preserving signal through the translation instead of flattening or discarding it — will create the most durable value in voice AI.

The Byte is The AI Collective’s insight series highlighting non-obvious AI trends and the people uncovering them, curated by Josh Evans and Noah Frank. Questions or pitches: [email protected].

🤝 Thanks to Our Premier Partner: Roam

Roam is the virtual workspace our team relies on to stay connected across time zones. It makes collaboration feel natural with shared spaces, private rooms, and built-in AI tools.

Roam’s focus on human-centered collaboration is why they’re our Premier Partner, supporting our mission to connect the builders and leaders shaping the future of AI.

Experience Roam yourself with a free 14-day trial!

🫵 Do You Belong on Our Newsletter?

Share your message with the world’s largest AI community. To inquire about partnership availability, reach out to our team below.

The AI Collective is a community of volunteers, made for volunteers. All proceeds directly fund future initiatives that benefit this community.


Get Involved in Your Community

Thank you to the thousands of volunteers around the world who make this work possible. We truly could not do this without you.

About Carol Zhu

Carol Zhu is CEO and Founder of DecodeNetwork.AI, building at the intersection of AI, psychology, and human connection. She previously launched products at AWS AI (SageMaker), TikTok Shop (founding PM), and Credit Karma, building systems that predict what users want and thinking hard about what that means. She writes on Substack and X about the gaps between what AI can measure and generate and what humans actually perceive.