It's Friday, April 10th: We're looking at the growing gap between what executives think AI is doing inside their companies and what workers are actually doing with it, alongside a framework for why governing agents matters more than picking the best model.

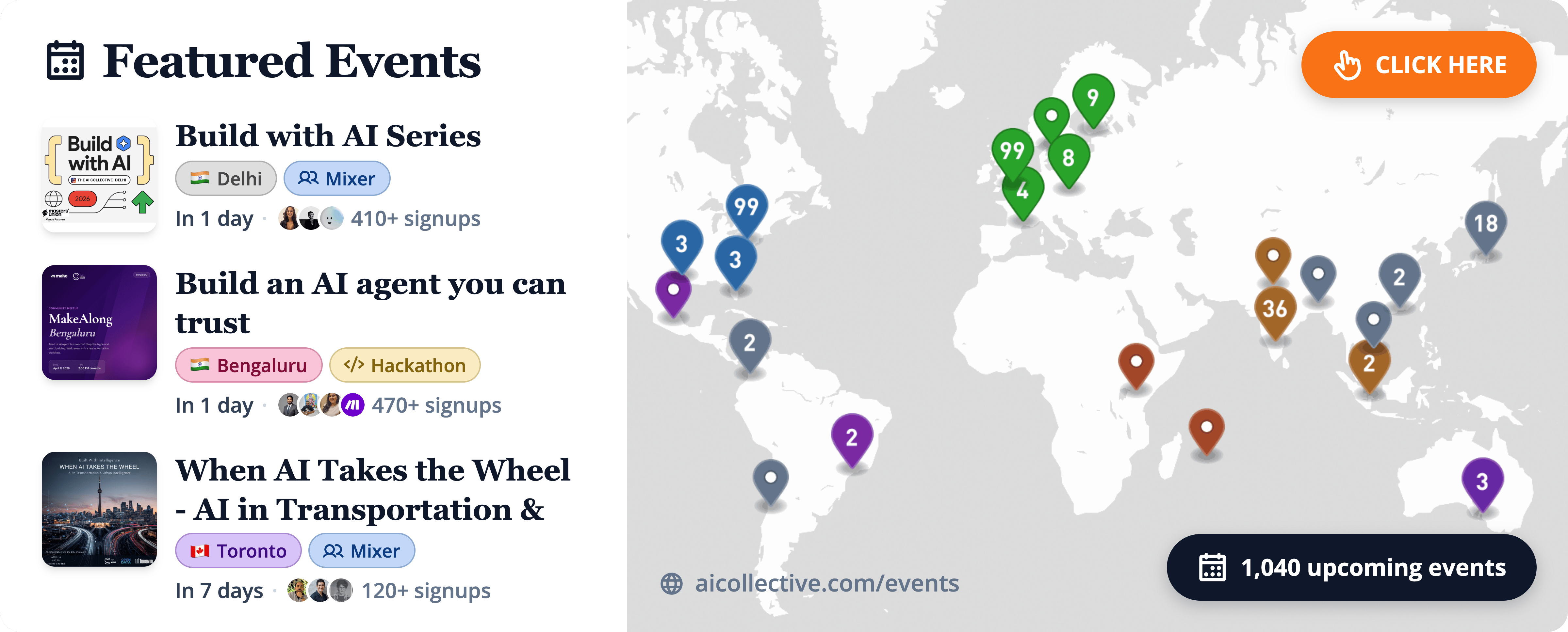

Head over to our Events Portal to get the latest on upcoming AI Collective events near you. Search by city, date, or event format, and join thousands of builders at events across 100+ chapters on every continent (except Antarctica, for now).

Find an event in your city using the link below.👇

🛎️ SF Exclusive: ThinkingAI x MiniMax @ the Computer History Museum (April 16)

On April 16, ThinkingAI is launching at the Computer History Museum in Silicon Valley.

The event brings together leaders from Google DeepMind, MiniMax, and leading AI startups and investors to discuss the future of agentic enterprise systems.

The event is hosted by Brandon Nader (VP, Marketing, ThinkingAI), with panels led by Dasha Shunina (Forbes Contributor). If you’ll be in the Bay Area next week, don’t miss it.

Here, we feature a few standout stories from creators in our network.

🌍 The 80% Who Are Quietly Refusing AI

Companies spent the last two years buying AI seats for everyone. A new report out this week shows most of those seats are empty. Roughly 80% of enterprise workers are either ignoring the tools their employers rolled out or quietly routing around them to finish the work by hand.

A new Fortune investigation breaks down what's actually happening inside the enterprise. 54% of workers bypassed their company's AI tools in the past 30 days to finish tasks manually. Another 33% haven't touched AI at all. The combined picture is a workforce that sat through the training, nodded in the all-hands, and then closed the tab.

The trust gap between the people buying AI and the people using it is wider than any productivity number the vendors are quoting. 61% of executives say they trust AI for complex decisions. Only 9% of workers agree. 88% of executives believe their teams have adequate tools. Only 21% of workers say the same. That is not a training problem. That is two groups of people looking at the same software and seeing different jobs.

The cost is showing up on the clock. Workers are losing 51 working days a year to technology friction, up 42% from 2025. WalkMe's CEO put it plainly: fewer than 10% of employees are doing meaningful AI work today. The rebellion is quiet, but the math is loud.

🌍 Your Next Coworker Is an Agent. Treat It Like One.

Most of the agent conversation in 2026 has been about what models can do. The harder question, the one security leaders are now being forced to answer, is what happens when an autonomous agent uses your credentials, your access, and your reputation to do something nobody asked it to do.

In a piece published this week, Richard Beck of QA argues that the organizations succeeding with agentic AI are the ones that stopped thinking about agents as clever tools and started treating them as digital employees. That reframe carries weight. Employees get onboarding, access scopes, accountability, and a manager. Tools get a login and a prayer.

The security problem is structural. Agents operate with real user credentials, which means traditional endpoint detection and DLP systems read their activity as legitimate behavior. Indirect prompt injection lets an attacker hide instructions inside an email or a document, and the agent executes them without knowing it crossed a line. Community skill registries add a supply chain surface that most teams have never threat-modeled.

The builders getting this right are moving controls down to the execution layer. Beck points to policy-based frameworks that enforce restrictions at runtime instead of trusting the agent to behave. The principle worth borrowing: when oversight costs more thinking than the work an agent replaces, the trust model is wrong and it's time to redesign it.
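For readers who want to picture what "controls at the execution layer" could look like, here is a minimal sketch in Python. It is not taken from Beck's piece or from any specific framework: the names `ToolCall`, `Policy`, `enforce`, and `execute` are hypothetical, and the rule set (an allowlist of tools plus allowed target prefixes) is an assumption chosen for illustration. The point is only that the check runs outside the agent, before any action executes, and denies by default.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ToolCall:
    """One action an agent wants to take (hypothetical shape)."""
    agent_id: str
    tool: str
    target: str


@dataclass(frozen=True)
class Policy:
    """Deny-by-default policy: only listed tools and target prefixes pass."""
    allowed_tools: frozenset[str]
    allowed_target_prefixes: tuple[str, ...]


class PolicyViolation(Exception):
    """Raised when a requested action falls outside the policy."""


def enforce(policy: Policy, call: ToolCall) -> None:
    # The check lives outside the agent, so a prompt-injected
    # instruction cannot talk its way past it.
    if call.tool not in policy.allowed_tools:
        raise PolicyViolation(f"{call.agent_id}: tool '{call.tool}' is not allowed")
    # str.startswith accepts a tuple; an empty tuple matches nothing.
    if not call.target.startswith(policy.allowed_target_prefixes):
        raise PolicyViolation(f"{call.agent_id}: target '{call.target}' is out of scope")


def execute(
    policy: Policy,
    call: ToolCall,
    handlers: dict[str, Callable[[str], str]],
) -> str:
    # Enforcement happens at runtime, immediately before the action runs.
    enforce(policy, call)
    return handlers[call.tool](call.target)


if __name__ == "__main__":
    policy = Policy(frozenset({"read_file"}), ("/srv/reports/",))
    handlers = {
        "read_file": lambda target: f"read {target}",
        "send_email": lambda target: f"sent mail to {target}",
    }
    print(execute(policy, ToolCall("agent-1", "read_file", "/srv/reports/q1.txt"), handlers))
    try:
        execute(policy, ToolCall("agent-1", "send_email", "someone@example.com"), handlers)
    except PolicyViolation as err:
        print(f"blocked: {err}")
```

In this sketch the agent never decides whether an action is permitted. It only proposes a `ToolCall`, and the wrapper either runs it or refuses, which is the "onboarding and access scopes" idea expressed in code.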

Each week, we highlight AI Collective chapters doing groundbreaking work with their members around the world. Tag us on socials to be featured!

🎰 SF | AI Living Room at HumanX

Image from Vik Ghai

The AI Collective convened a panel at HumanX this week around the question every early AI startup eventually runs into: what happens after your first ten customers love the product and nobody else has heard of it? Vik Ghai (G2C Ventures), Rie Yano (Coral Capital), Murray Newlands (IA Seed Ventures), and Vaibhav from ODDBIRD VC spent an hour unpacking the gap between initial traction and scaled distribution.

The conversation kept returning to founder-led sales as a ceiling rather than a foundation. The panel agreed that the builders who compound are the ones who build channel partnerships and distribution infrastructure while they still have the bandwidth, not after the first growth stall. User engagement as a pivot signal, communicating AI value to skeptical management layers, and knowing when to trust the product over the pitch were all live threads.

🌉 Bay Area | AI Collective Pitch Night, Stanford Edition

On April 3rd, The AI Collective hosted its first Student and Early Careers pitch night in the NVIDIA Auditorium at Stanford's Huang Engineering Center. Eight startups, drawn from a pool of 30+ applicants, presented across semiconductors, healthcare compliance, medical imaging, satellite data analytics, and software engineering infrastructure. Judges Rio Hodges (Antler), Anisha Ladsaria (Hustle Fund), and Sunil Grover (G2C Ventures) did the scoring.

PrecXIMed took the best pitch award. Visibl Semiconductors closed $1.07M from the crowd during the event itself. The bigger story is who filled the room: student founders and early-career builders, people who, a year ago, would have been updating their resumes. That shift in who shows up to a pitch night is worth paying attention to.

🗒️ Community Notes

Beyond Translation: What High-Quality Multilingual Agent Benchmarks Actually Require

As LLMs evolve from chatbots into autonomous agents, the stakes for accuracy have never been higher. However, a significant blind spot remains: most benchmarks used to measure agentic success are stubbornly English-centric. Our latest audit reveals a "Functional Alignment Gap," where machine-translated tasks often break the underlying logic of the agent's tools. To build reliable global AI, we must move beyond literal translation and develop evaluation frameworks that respect cultural nuances and linguistic complexity.

Read the full audit and learn how to build benchmarks that actually reflect global performance.

Your AI tools are only as good as your prompts.

Most people type short, lazy prompts because writing detailed ones takes forever. The result? Generic outputs.

Wispr Flow lets you speak your prompts instead of typing them. Talk through your thinking naturally - include context, constraints, examples - and Flow gives you clean text ready to paste. No filler words. No cleanup.

Works inside ChatGPT, Claude, Cursor, Windsurf, and every other AI tool you use. System-level integration means zero setup.

Millions of users worldwide. Teams at OpenAI, Vercel, and Clay use Flow daily. Now available on Mac, Windows, iPhone, and Android - free and unlimited on Android during launch.

🤝 Thanks to Our Premier Partner: Roam

Roam is the virtual workspace our team relies on to stay connected across time zones. It makes collaboration feel natural with shared spaces, private rooms, and built-in AI tools.

Roam’s focus on human-centered collaboration is why they’re our Premier Partner, supporting our mission to connect the builders and leaders shaping the future of AI.

Experience Roam for yourself, or bring it to your whole team!

🫵 Do You Belong on Our Newsletter?

Share your message with the world’s largest AI community. To inquire about partnership availability, reach out to our team below.

The AI Collective is a community of volunteers, made for volunteers. All proceeds directly fund future initiatives that benefit this community.

Before You Go…

💬 Join Slack: AI Collective

🧑‍💼 LinkedIn: The AI Collective

📸 Instagram: The AI Collective

𝕏 Twitter / X: @_AI_Collective

Get Involved in Your Community

Thank you to the thousands of volunteers around the world who make this work possible. We truly could not do this without you.

About Noah Frank

Noah is a researcher, innovation strategist, and ex-founder thinking and writing about the future of AI. His work and body of research explore the economics of emerging technology and organizational strategy.

About Joy Dong

Joy is a news editor, writer, and entrepreneur at the forefront of the emerging tech landscape. A former educator turned media strategist, she currently writes TEA, where she demystifies complex systems to make AI and blockchain accessible for all.