Upcoming Events

Tue, May 28th: 🧠 GenAI Collective 🧠 Research Roundtable 👩‍🔬👨‍🔬

Thu, May 30th: 🧠 GenAI Collective 🧠 SF Demo Extravaganza 🚀

Wed, June 5th: 🧠 GenAI Collective NYC x NY Tech Week 🧠 AI in the Big Apple

🗓️ Hungry for even more AI events? Check out SF IRL, MLOps SF, or Cerebral Valley’s spreadsheet!

If you didn’t get a chance to listen to the most recent episode of the Collective Intelligence Community Podcast, check out our very own Thomas Joshi’s interview with Prasanna Ganesan, CEO of Machinify, an AI platform for operationalizing healthcare data. In this episode, you’ll learn how AI companies can catch cost curves early to deliver products more cheaply than competitors, and how to craft a go-to-market strategy that proves the efficacy of an AI platform to large customers in high-stakes environments like healthcare.

The Rise of LLMs is a Watershed Moment for Cybersecurity

The current enterprise cyber model is broken

I started my career as a cyber defense consultant at Accenture, building enterprise Security Information and Event Management (SIEM) systems. Building a SIEM requires deploying an agent on all enterprise systems and appliances and integrating with virtually every enterprise security tool (Endpoint Security, Cloud Security, Application Security, Identity & Access Management, Network Proxy, and the list continues). Security teams then have to manually write hundreds of detection rules that surface anomalies and send alerts to a security operations center (SOC) for investigation. It’s a continuous cat-and-mouse game that can cost more than $1M per year, and security teams struggle to write detection rules and respond to alerts fast enough to protect themselves against emerging threats.

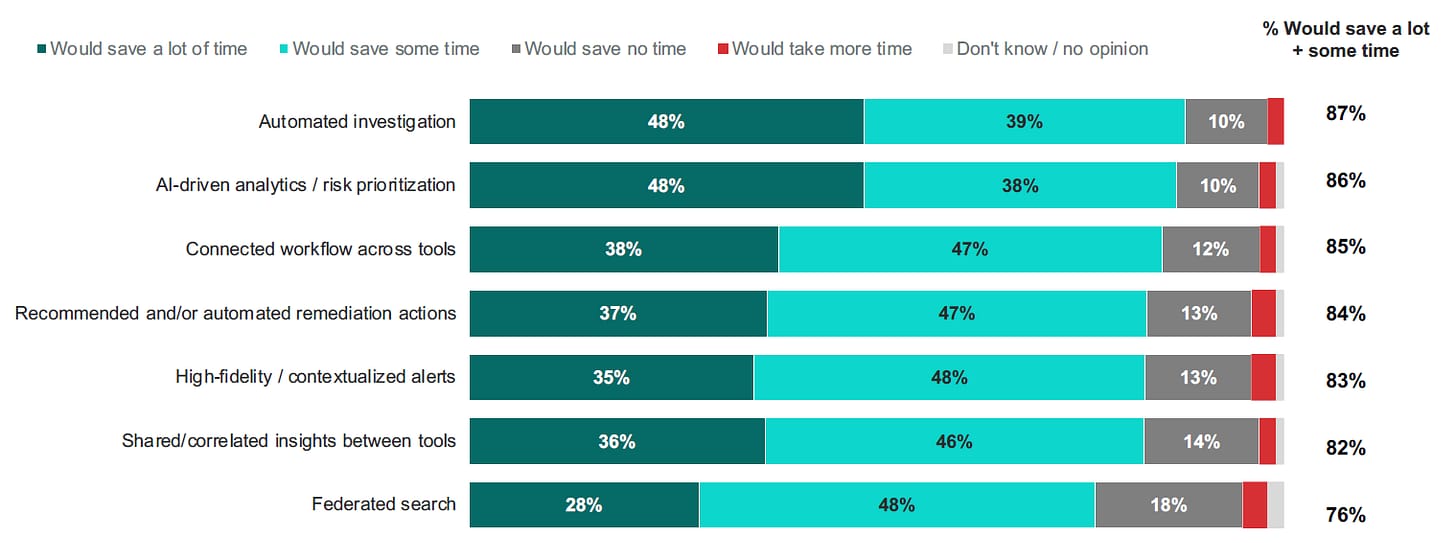
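To make the idea of a hand-written detection rule concrete, here is a minimal Python sketch. It is an illustration only, not how any particular SIEM product works: the `Event` fields, the `failed_login_rule` function, and the threshold of 5 are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """A simplified log event (hypothetical); real SIEM events carry far more fields."""
    user: str
    action: str      # e.g. "login_failed" or "login_ok"
    source_ip: str


def failed_login_rule(events, threshold=5):
    """Return the users whose failed-login count meets or exceeds the threshold."""
    failures = Counter(e.user for e in events if e.action == "login_failed")
    return sorted(user for user, count in failures.items() if count >= threshold)


# Sample events: six failed logins for alice, two for bob, one success for bob.
events = (
    [Event("alice", "login_failed", "203.0.113.7")] * 6
    + [Event("bob", "login_failed", "198.51.100.4")] * 2
    + [Event("bob", "login_ok", "198.51.100.4")]
)

alerts = failed_login_rule(events)
print(alerts)  # ['alice']
```

Even this toy rule needs a threshold that someone has to pick and tune by hand. Multiply that by hundreds of rules across every tool in the stack and the maintenance burden described above becomes clear.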

Today, both the detection and response processes are highly manual and often require offshore labor through managed security service providers like Accenture or IBM to handle the sheer volume of alerts. According to Vectra’s 2023 report, SOC teams receive 4,500 alerts per day, which translates to over $3B of annual labor spend. Even with this outsized spend, the same report found that SOC teams are unable to deal with over two-thirds of these alerts, leaving a large, unsolved gap in enterprise security programs. Further, the global gap between the number of skilled cyber professionals needed and the number available rose 12.6% to a record of nearly 4 million. As a result, security teams are often overworked, under-resourced, and under-trained, which limits their ability to keep up with attackers. The World Economic Forum predicts the costs of cybercrime will hit $20T+ globally by 2027, up nearly 4x from 2021.

As companies continue to digitize and add complexity to their infrastructure and IT stack, the attack surface will grow and further increase the need for generative tools and automation. Security operations is just one example of a current challenge that is ripe for disruption with generative AI, so let’s dive into how LLMs can solve challenges across the cybersecurity landscape.

The potential of LLMs in cyber!

The adoption of large language models (LLMs) and GenAI apps is a watershed moment for the cybersecurity industry, which is long overdue for AI-enabled disruption. The world has already had a taste of how generative agents and tools can create a step-function increase in productivity across other areas of the enterprise, from software development to customer service, and many will argue that cybersecurity could see the greatest value given how critical speed and accuracy are. Let’s dive into some of the cybersecurity areas that are already seeing early signs of disruption:

Security Monitoring & Threat Detection: LLMs excel at gathering, correlating, and analyzing data from large datasets to classify the source and criticality of an incident while maintaining business context. Companies like Anvilogic or Radiant Security ingest data from disparate security sources, such as network logs, identity providers, and threat intel feeds, to elevate the alerts with the highest level of criticality. They can also identify monitoring gaps and proactively recommend new detection rules to mitigate emerging risks. This enables organizations to improve detection speed and accuracy, which is crucial for staying ahead of evolving cyber threats.
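As a rough illustration of what "elevating the alerts with the highest level of criticality" can mean, the Python sketch below ranks alerts from several sources with a simple hand-written score. It does not use an LLM and does not represent how Anvilogic or Radiant Security work: the `Alert` fields, the `SEVERITY_WEIGHT` values, and the scoring formula are all assumptions chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical weights; a real system would learn or tune these.
SEVERITY_WEIGHT = {"low": 1, "medium": 3, "high": 5}


@dataclass
class Alert:
    alert_id: str
    source: str                 # which tool raised the alert
    severity: str               # "low", "medium", or "high"
    asset_criticality: int      # 1 (lab machine) to 5 (crown-jewel system)
    matches_threat_intel: bool  # indicator also seen in a threat intel feed


def score(alert):
    """Combine severity and business context; double the score on a threat intel match."""
    base = SEVERITY_WEIGHT[alert.severity] * alert.asset_criticality
    return base * 2 if alert.matches_threat_intel else base


def prioritize(alerts, top_n=2):
    """Return the top_n alerts, highest score first."""
    return sorted(alerts, key=score, reverse=True)[:top_n]


alerts = [
    Alert("A1", "network_proxy", "low", 2, False),       # score 2
    Alert("A2", "identity_provider", "high", 5, False),  # score 25
    Alert("A3", "endpoint", "medium", 4, True),          # score 24
    Alert("A4", "cloud", "medium", 1, False),            # score 3
]

print([a.alert_id for a in prioritize(alerts)])  # ['A2', 'A3']
```

The promise of LLM-based tools is to replace the fixed weights here with context drawn from the alerts themselves and from the business, rather than from a formula a human has to maintain.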

Incident Response & Investigation: Automating incident response with LLMs drastically reduces the time needed to identify the cause of a breach, build IR playbooks, and respond to the alert. New entrants like Prophet Security or Dropzone AI can synthesize complex alerts from disparate tools into plain English and automate remediation. By analyzing historical incident data, LLMs can also predict future attacks and simulate hypothetical scenarios, empowering organizations to proactively strengthen their cyber defenses and minimize the impact of breaches.

Security Awareness & Training: LLMs can analyze an employee's job role, risk level, and security knowledge to help deliver individualized training materials that are relevant and insightful, while capturing real-time feedback. KnowBe4, a large incumbent in security awareness and training, announced a GenAI-enabled product that can proactively select security training modules or simulate phishing attacks that are hyper-personalized to the specific user. The versatility of LLMs in adapting training content to different employee personas and experiences enhances overall security awareness, enabling organizations to mitigate the growing risks of highly personalized and more advanced social engineering campaigns.

Cybersecurity Compliance: Leveraging LLMs for compliance tasks automates mundane processes, such as report summarization and gap analysis, freeing up resources for more critical operations. Additionally, LLMs can assist in mapping controls across the increasing number of data privacy and security regulations, ensuring organizations maintain regulatory compliance while optimizing operational efficiency.

Data Security: LLMs can classify sensitive data types (IP, financial models, engineering designs) with human-level accuracy. Instead of relying on manual review or superficial markers such as title, document type, or file size, innovators like Harmonic Security have built a large classification model trained on a huge corpus of public data/documents to provide more granular visibility and controls for sensitive data (e.g. a user is exfiltrating an investment memo or sensitive design review). They also allow security teams to write data loss prevention (DLP) rules in plain English, which decreases the time and complexity for security teams to build out controls.

Beware of the risks!

Although LLMs will transform cyber protection, there are serious risks of adversaries using this technology to increase the efficacy of their attacks. Malicious actors are already using LLMs to automate reconnaissance, enhance malware scripting, find and exploit vulnerabilities, and create hyper-personalized social engineering campaigns. Microsoft and OpenAI released a report in February 2024 that documented some of the LLM-based tactics they’ve already responded to from a number of nation-state threat actors like Forest Blizzard (Russia) and Emerald Sleet (North Korea).

Generative AI is poised to revolutionize cybersecurity, addressing key challenges in detection, response, and training while introducing new risks from malicious actors wielding similar tools. Will the cybersecurity industry leverage LLMs to stay ahead, or will it succumb to an AI-fueled wave of more sophisticated attacks?

With that, I hope you enjoyed the newsletter and would love to hear how the community sees the future of cybersecurity being transformed by LLMs. If you would like to share your thoughts, hit up Eric on the community Slack, or reach out via email at [email protected] for a feature in our next newsletter! I am also excited to announce that we are looking for one of the best and brightest to join the newsletter as a content creator. Reach out if you’re interested in joining!

Events Recap

A couple of Wednesdays ago we hosted our first event in collaboration with Girls in Tech at the SVB Experience Center! We had an incredible turnout for our star-studded lineup of keynote speakers and panelists as we talked about diversity and equity in tech! 🫶

Every couple of months we host town hall events – a casual online forum to share what the GenAI Collective has been up to and how you can get involved! Questions, comments, or concerns about anything related to the community? Look out for the next one in late June! 😄

Value Board

Join the GenAI Collective team!

Are you passionate about AI, meticulous about details, and a wizard at organizing events? The GenAI Collective is on the lookout for an Events Leader to bring our exciting events to life! This is your chance to dive deep into the AI community, enrich your network, and showcase your skills. If you’re ambitious, organized, and ready to make a splash in the AI scene, we want to hear from you. Let’s create unforgettable events together! Reach out at [email protected] 😄

About Eric Fett

Eric leads the development of the newsletter and online presence. He is currently an investor at NGP Capital where he focuses on Series A/B investments across enterprise AI, cybersecurity, and industrial technology. He’s passionate about working with early-stage visionaries on their quest to create a better future. When not working, you can find him on a soccer field or at a sushi bar! 🍣