Upcoming Events

Wed, Nov 1st: 🗣️ AI Salon x GenAI Collective 🧠 Human Flourishing

Thu, Nov 16th: 🧠 GenAI Collective 🧠 Doors to Beyond 🌌

Tue, Nov 21st: 🧠 GenAI Collective 🧠 Virtual Friendsgiving! 🦃

The Next Frontier of Generative AI is on the Edge

Recent developments within Generative AI and the edge computing ecosystem are opening avenues for real-time, on-device processing. The shift towards the edge for Generative AI is a tactical move to bypass the constraints of centralized cloud infrastructure, particularly in scenarios demanding low latency and stringent privacy. Here’s an exploration of some of those developments and why we believe the future of Generative AI will happen on the edge:

1. NVIDIA Unveils the Jetson Generative AI Lab

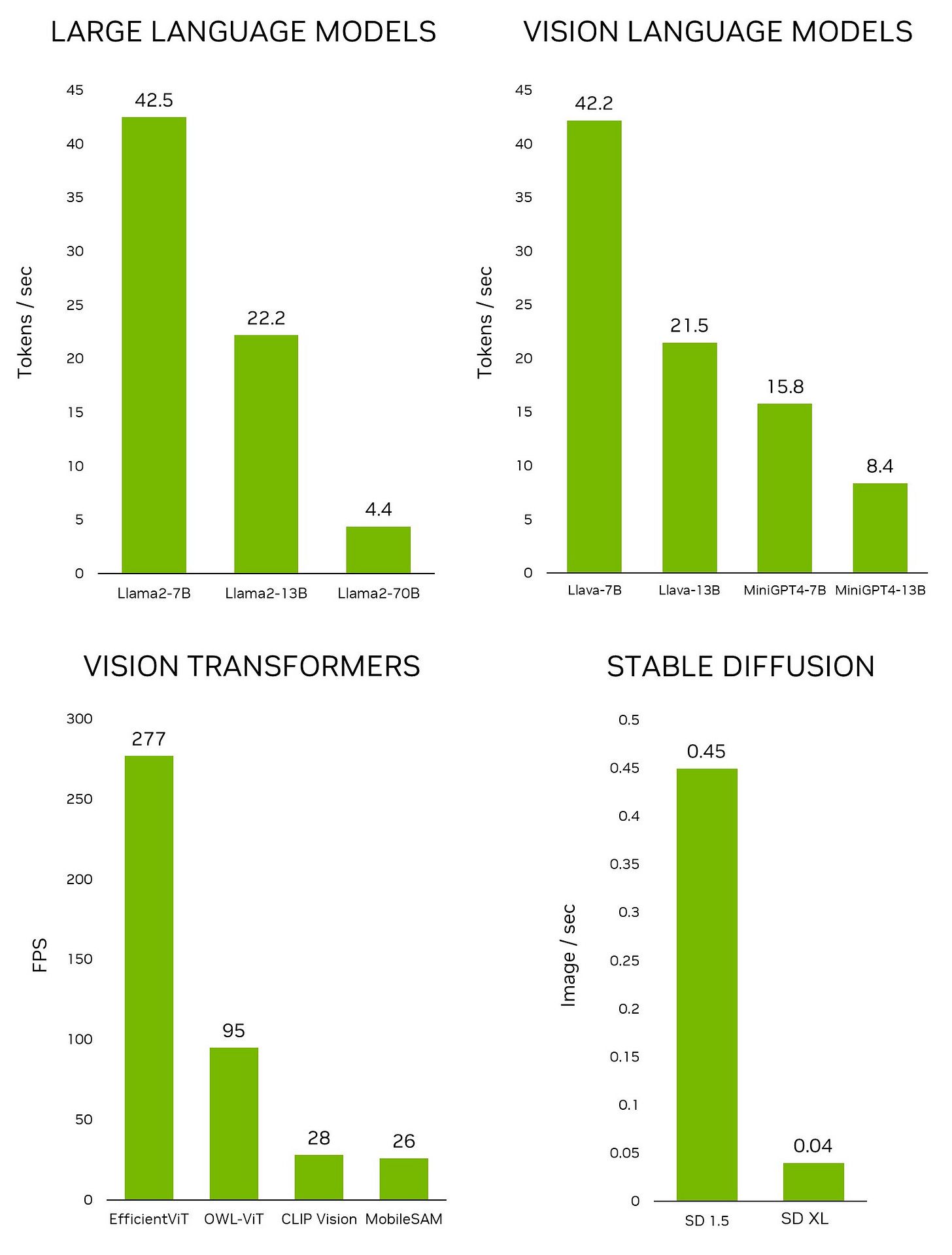

Two weeks ago, NVIDIA announced major expansions to two frameworks on its Jetson platform for edge AI and robotics. The updates include a new Jetson Generative AI Lab, where developers can work with the latest open-source generative AI models, and the general availability of the Isaac ROS 2.0 robotics framework. NVIDIA also released its TAO Toolkit to help developers create efficient and accurate AI models for edge applications. TAO features a low-code interface for fine-tuning and optimizing vision AI models, including ViT and vision foundation models. Why is this important? Incumbents are showing that they are prioritizing the software tools needed to support edge AI innovation. Some early benchmarks for NVIDIA’s Jetson AGX Orin are below:

Inferencing performance of leading Generative AI models on Jetson AGX Orin

2. Generative AI powering the next-gen IoT Ecosystem

The number of connected devices globally has exploded in the last five years, and developers are excited about the future of Generative AI within the IoT ecosystem. Combined with edge computing and IoT, Generative AI uses deep learning models trained in the cloud to run inference and make predictions on the edge from sensor data. This brings benefits including data security, power efficiency, real-time intelligence, and greater reliability. Current use cases, including predictive maintenance, industrial quality inspection, and autonomous driving, are poised to be revolutionized by these developments.

3. Embracing a Hybrid Edge Architecture

Qualcomm has been leading the charge in making the case for a hybrid AI architecture and on-device AI. A hybrid AI architecture distributes and coordinates AI workloads among cloud and edge devices, rather than processing in the cloud alone. The cloud and edge devices (smartphones, cars, personal computers, and Internet of Things (IoT) devices) work together to deliver more powerful, efficient, and highly optimized AI. By leveraging the compute capabilities of edge devices, on-device AI can deliver performance, personalization, privacy, and security. AI models with more than 1 billion parameters are already running on phones with performance and accuracy levels similar to those of the cloud, and models with 10 billion parameters or more are slated to run on devices in the near future.
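For readers who think in code, here is a minimal sketch of how a hybrid setup might decide where a request runs. It is purely illustrative and not Qualcomm's design: the `Request` and `Device` types, the `route` function, and the parameter thresholds are all hypothetical names and numbers chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class Request:
    model_params_b: float    # model size in billions of parameters
    privacy_sensitive: bool  # True if the data should never leave the device


@dataclass
class Device:
    max_params_b: float  # largest model the device can run locally (assumed figure)
    online: bool         # whether the cloud is currently reachable


def route(request: Request, device: Device) -> str:
    """Pick where to run a request under a simple, hypothetical policy."""
    # Prefer the edge whenever the model fits on the device.
    if request.model_params_b <= device.max_params_b:
        return "edge"
    # The model is too large for the device. The cloud is the only option,
    # but it is ruled out for private data or when there is no connection.
    if request.privacy_sensitive or not device.online:
        return "unavailable"
    return "cloud"


# Illustrative phone that can run models of up to 1 billion parameters.
phone = Device(max_params_b=1.0, online=True)
small_job = route(Request(model_params_b=1.0, privacy_sensitive=False), phone)
large_job = route(Request(model_params_b=10.0, privacy_sensitive=False), phone)
private_job = route(Request(model_params_b=10.0, privacy_sensitive=True), phone)
```

In this sketch the edge is the default and the cloud is the fallback, which mirrors the idea that on-device capacity is what determines how much work can stay local. A real system would likely also weigh battery, latency targets, and cost.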

4. 5G as a Catalyst for Edge AI

The emergence of 5G and IoT technologies is propelling innovation within AI, augmenting its effectiveness with localized decision-making and near real-time data. Combining 5G's low latency and faster processing with edge architectures is crucial for applications that demand quick decisions and responsiveness. In healthcare, for instance, medical devices supported by edge AI, such as laparoscopes, are providing real-time insights to surgeons, enabling faster, life-saving decisions.

The move of Generative AI to the edge reflects a broader shift towards real-time, on-device processing that addresses latency and privacy concerns. This evolution is set to unlock new possibilities across various industries, and we at The GenAI Collective are excited to follow this ecosystem as it develops!

As always, we are committed to elevating unique perspectives from the community. If you have any topics or insights you would like to share, hit up Eric on the community Slack or reach out via email at [email protected] for a feature in our next newsletter.

Events Recap

Thank you to everyone who joined us for our first virtual event with OctoML! We had hundreds of questions from the community and enjoyed discussing topics around getting started with LLMs, key challenges, and practical applications.

We also want to thank our friends at Rocketship VC for an amazing AI Blastoff Summit! The panelists were stellar, and we enjoyed exploring how the team leverages a data-driven approach to selecting early-stage winners!

About Eric Fett

Eric joined The GenAI Collective in early September to lead the development of the newsletter. He is currently an investor at NGP Capital, where he focuses on Series A/B investments across enterprise AI, cybersecurity, and industrial technology. He’s passionate about working with early-stage visionaries on their quest to create a better future. When not working, you can find him on a soccer field or at a sushi bar! 🍣