It's Wednesday, March 11th: Anthropic shipped multi-agent code review built from their own internal process, Andrew Ng open-sourced a doc hub that stops coding agents from hallucinating APIs mid-task, and Google released an embedding model that finally treats text, images, video, and audio as one unified memory space.

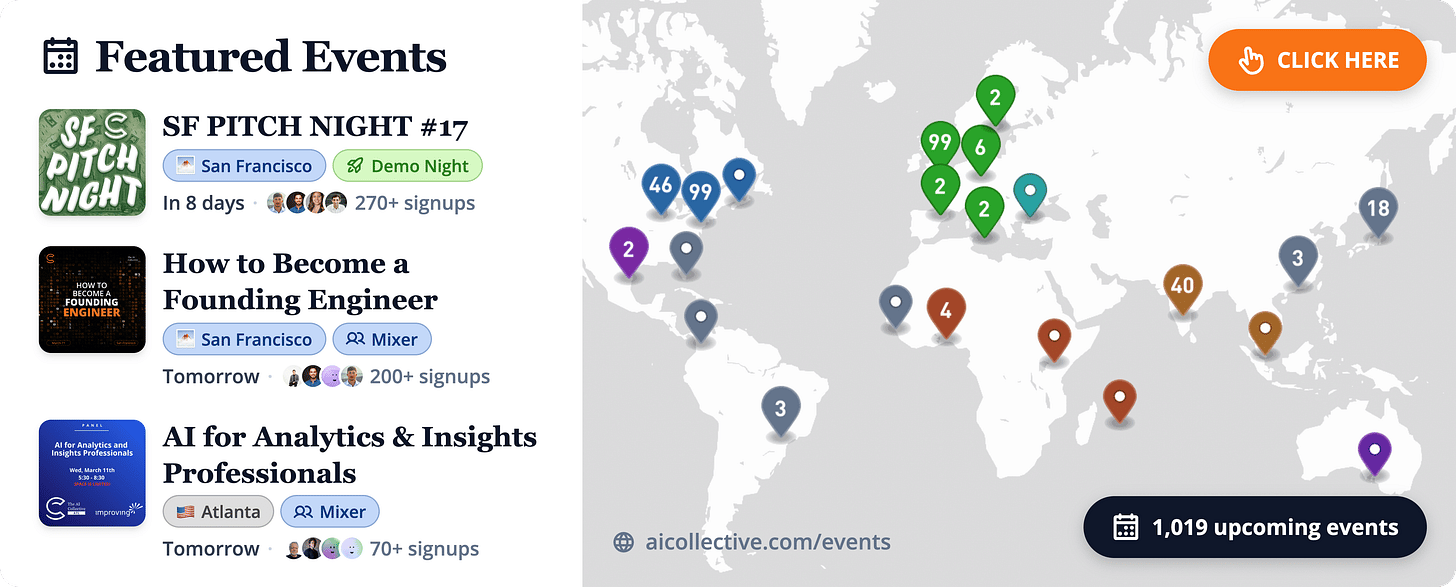

Head over to our Events Portal to get the latest on upcoming AI Collective events near you. Search by city, date, or event format, and join thousands of builders at events across 100+ chapters on every continent (except Antarctica, for now).

🌁 Based in SF? Check out SF IRL, MLOps SF, GenerativeAISF, or Cerebral Valley’s spreadsheet for more!

In Today’s Top Tools, we spotlight some of the most innovative, creative AI apps that we recommend adding to your stack.

1️⃣ Anthropic brings code review to Claude Code

Code output per Anthropic engineer has grown 200% in the last year, and code review has become the bottleneck. Claude Code now ships a multi-agent review system modeled directly on the one Anthropic runs internally, dispatching a team of agents on every PR to catch the bugs that quick skims miss. Before Anthropic deployed it internally, 16% of PRs got substantive review comments; now 54% do. It’s built for depth, not speed, and is available now as a research preview for Team and Enterprise plans.

How it works:

Every time a PR is opened, Code Review spins up a coordinated team of agents.

Agents search for bugs in parallel, verify findings to filter false positives, and rank issues by severity

Results land as a single high-signal overview comment plus inline annotations for specific bugs

Reviews scale with PR size: large, complex changes get more agents and a deeper pass; trivial diffs get a lightweight sweep

Average review time is ~20 minutes; average cost is $15–25, scaling with PR complexity
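The flow above (parallel search, verification, severity ranking) can be sketched in a few lines. This is a toy illustration, not Anthropic's implementation: the `Finding` fields, the three agent functions, the evidence-based `verify` check, and the severity scale are all hypothetical stand-ins.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    message: str
    severity: int  # hypothetical scale: higher is more serious
    evidence: str  # snippet the agent claims to have seen in the diff


def auth_agent(diff: str) -> list:
    # Hypothetical agent: flags a direct equality check on a token.
    if "== auth_token" in diff:
        return [Finding("token compared with ==", 3, "== auth_token")]
    return []


def todo_agent(diff: str) -> list:
    # Hypothetical agent: flags leftover TODO markers.
    if "TODO" in diff:
        return [Finding("unresolved TODO in changed code", 1, "TODO")]
    return []


def speculative_agent(diff: str) -> list:
    # Hypothetical agent that always guesses, to show a false positive.
    return [Finding("unused json import", 2, "import json")]


def verify(finding: Finding, diff: str) -> bool:
    # Stand-in for the verification pass: keep a finding only if the
    # evidence it cites really appears in the diff.
    return finding.evidence in diff


def review(diff: str, agents: list) -> list:
    # 1. Run every agent on the diff in parallel.
    with ThreadPoolExecutor() as pool:
        batches = list(pool.map(lambda agent: agent(diff), agents))
    candidates = [finding for batch in batches for finding in batch]
    # 2. Filter out findings that fail verification.
    verified = [f for f in candidates if verify(f, diff)]
    # 3. Rank what is left, most severe first.
    return sorted(verified, key=lambda f: f.severity, reverse=True)


SAMPLE_DIFF = "+ if token == auth_token:\n+     pass  # TODO: rotate keys\n"
ranked = review(SAMPLE_DIFF, [todo_agent, speculative_agent, auth_agent])
for finding in ranked:
    print(finding.severity, finding.message)
```

The speculative finding is dropped because its evidence never appears in the sample diff, which is the role the verification step plays in keeping the final comment high-signal.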

What it catches:

Anthropic’s internal numbers show how much it surfaces on real codebases.

On large PRs (1,000+ lines): 84% get findings, averaging 7.5 issues per review

On small PRs (under 50 lines): 31% get findings, averaging 0.5 issues

Less than 1% of findings are marked incorrect by engineers

In one case, a one-line production change that would have silently broken authentication was flagged and fixed before merge

How to get started:

Code Review is available now as a research preview for Team and Enterprise plans.

Admins enable it in Claude Code settings, install the GitHub App, and select which repos to run reviews on

Once enabled, reviews run automatically on new PRs — no per-developer configuration required

Monthly org spend caps, repo-level toggles, and an analytics dashboard give admins full cost visibility
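For a rough sense of what a spend cap means in practice, the quoted $15–25 average can be turned into back-of-the-envelope math. The helper names below are hypothetical, and since the figures are averages that scale with PR complexity, real bills will differ.

```python
def monthly_review_cost(prs_per_month: int, low: float = 15.0, high: float = 25.0):
    # Range of monthly spend if every PR costs between `low` and `high` dollars.
    return prs_per_month * low, prs_per_month * high


def prs_within_cap(monthly_cap: float, cost_per_review: float) -> int:
    # Number of whole reviews that fit under a monthly spend cap.
    return int(monthly_cap // cost_per_review)


low_total, high_total = monthly_review_cost(200)
print(low_total, high_total)          # 3000.0 5000.0
print(prs_within_cap(4000.0, 25.0))   # 160
```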

2️⃣ Chub gives coding agents curated, versioned docs

Coding agents hallucinate APIs because they’re searching the open web for docs that may be outdated, incomplete, or just wrong. Context Hub (chub), released by Andrew Ng, is an open-source CLI tool that gives agents a curated, versioned doc layer instead, so they fetch exactly what they need, annotate gaps locally, and improve with every session. All content is maintained as markdown in the public repo, so you can inspect exactly what your agent reads and contribute fixes back.

How it works:

Chub is designed to be used by your agent, not by you directly.

chub search "openai" — finds available docs and skills across the registry

chub get openai/chat --lang py — fetches the versioned, curated doc your agent actually needs

Prompt your agent to use it, or drop a SKILL.md into ~/.claude/skills/ to wire it in automatically for Claude Code
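If your agent harness is written in Python, the two commands above can be wrapped as a tool call. This is a minimal sketch: the command shapes come straight from the examples above, while the wrapper names and the assumption that `chub get` prints the doc to stdout are ours, not confirmed behavior.

```python
import shutil
import subprocess
from typing import Optional


def chub_search_command(query: str) -> list:
    # Mirrors: chub search "openai"
    return ["chub", "search", query]


def chub_get_command(doc_id: str, lang: str) -> list:
    # Mirrors: chub get openai/chat --lang py
    return ["chub", "get", doc_id, "--lang", lang]


def fetch_doc(doc_id: str, lang: str) -> Optional[str]:
    # Returns None when the CLI is not installed, so callers can fall back.
    if shutil.which("chub") is None:
        return None
    # Assumption: the fetched doc is written to stdout.
    result = subprocess.run(
        chub_get_command(doc_id, lang),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout


print(chub_get_command("openai/chat", "py"))
```

Passing the arguments as a list rather than a shell string avoids quoting problems when an agent supplies the query.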

Self-improving agents:

The real value is what happens after the first fetch.

Annotations: Agents attach local notes to docs when they discover gaps (e.g., “needs raw body for webhook verification”); these persist across sessions and appear automatically on future fetches

Feedback: Agents vote docs up or down; ratings flow back to maintainers to improve content for the whole community

Incremental fetch: Docs can span multiple reference files, and agents pull only what they need, with no wasted context tokens
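To make the annotation idea concrete, here is a toy version of notes that persist across sessions and get appended on later fetches. It is not how Chub stores annotations: the `AnnotationStore` class, the JSON file layout, and the `example/webhooks` doc ID are all hypothetical.

```python
import json
import tempfile
from pathlib import Path


class AnnotationStore:
    """Toy local note store keyed by doc ID (hypothetical layout)."""

    def __init__(self, path: Path):
        self.path = path

    def _load(self) -> dict:
        if self.path.exists():
            return json.loads(self.path.read_text())
        return {}

    def annotate(self, doc_id: str, note: str) -> None:
        # Append the note and write it to disk so later sessions see it.
        notes = self._load()
        notes.setdefault(doc_id, []).append(note)
        self.path.write_text(json.dumps(notes, indent=2))

    def fetch(self, doc_id: str, doc_text: str) -> str:
        # Return the doc with any saved notes appended.
        notes = self._load().get(doc_id, [])
        if not notes:
            return doc_text
        lines = "\n".join(f"- {note}" for note in notes)
        return f"{doc_text}\n\nLocal notes:\n{lines}"


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "annotations.json"
    AnnotationStore(path).annotate(
        "example/webhooks", "needs raw body for webhook verification"
    )
    # A fresh store object stands in for a later agent session.
    merged = AnnotationStore(path).fetch("example/webhooks", "# Webhooks")
    print(merged)
```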

Why it matters for builders:

This closes one of the most annoying failure modes in production agentic systems.

Eliminates the “noisy web search → broken code → manual fix → repeat next session” loop

Versioned, language-specific docs mean your agent is reading the same source of truth as your team

Open and community-maintained: any API provider, framework author, or developer can contribute

3️⃣ Google releases Gemini Embedding 2

Most embedding models force you to pick a modality. Gemini Embedding 2 throws that tradeoff out: it’s Google’s first model that maps text, images, video, audio, and PDFs into a single unified embedding space natively. That means your retrieval pipeline, memory layer, or RAG system can reason across all content types at once, without stitching together separate encoders or running transcription as a preprocessing step.

What it supports:

The model handles the full range of inputs modern agents actually encounter.

Up to 8,192 text tokens per request, across 100+ languages

Up to 6 images per request, with interleaved image + text inputs in a single call

Up to 120 seconds of video ingested natively

Audio ingested directly, no transcription pipeline required

PDFs up to 6 pages
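A cheap pre-flight check against the limits listed above can save a failed request. This sketch only encodes the numbers quoted here; the `EmbedRequest` shape and field names are hypothetical, and how the limits interact when modalities are mixed in one call is not covered.

```python
from dataclasses import dataclass

# Per-request limits as listed above.
LIMITS = {
    "text_tokens": 8192,
    "images": 6,
    "video_seconds": 120,
    "pdf_pages": 6,
}


@dataclass
class EmbedRequest:
    # Hypothetical summary of what a request contains.
    text_tokens: int = 0
    images: int = 0
    video_seconds: float = 0
    pdf_pages: int = 0


def limit_violations(request: EmbedRequest) -> list:
    # One message per field that exceeds its quoted limit.
    return [
        f"{name}: {getattr(request, name)} exceeds {cap}"
        for name, cap in LIMITS.items()
        if getattr(request, name) > cap
    ]


print(limit_violations(EmbedRequest(text_tokens=9000, images=6)))
```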

Architecture:

Two design choices make it practical to deploy at different scales.

Matryoshka Representation Learning (MRL): Flexible output dimensions at 3072, 1536, or 768: choose the precision vs. cost tradeoff that fits your use case

Unified semantic space: Cross-modal retrieval works out of the box, so a text query can surface a relevant video clip or image without any special handling
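With MRL-style embeddings, the leading dimensions carry the most information, so a smaller vector is usually obtained by keeping a prefix of the full one and rescaling it to unit length. The sketch below shows that general technique on a toy vector. It does not call the Gemini API, and whether the service returns shortened vectors already normalized is something to confirm in the official docs.

```python
import math


def truncate_embedding(vector: list, dim: int) -> list:
    # Keep the first `dim` values, then rescale to unit length so that
    # cosine and dot-product comparisons stay meaningful.
    if dim > len(vector):
        raise ValueError("dim cannot exceed the original vector length")
    head = vector[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0:
        return head
    return [x / norm for x in head]


full = [3.0, 4.0, 12.0]  # toy stand-in for a 3072-dimension vector
short = truncate_embedding(full, 2)
print(short)
```

Vectors stored at one dimension should only be compared with queries truncated to the same dimension.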

Why it matters for agent builders:

A unified embedding space is effectively a true memory layer for multimodal agents.

Agents can store and retrieve memories across all content types, not just text, in a single vector store

Semantic intent is preserved across modalities, so “find the part where they discuss pricing” works whether the source is a PDF, a recording, or a slide deck

Available now in public preview via the Gemini API and Vertex AI
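Here is what "one vector store for every modality" looks like in miniature. The vectors are hand-written toy values rather than real model output, and the `Memory` record, file names, and `search` helper are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Memory:
    source: str
    modality: str
    vector: list


def cosine(a: list, b: list) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(store: list, query_vector: list, top_k: int = 2) -> list:
    # Rank every memory by similarity to the query, whatever its modality.
    ranked = sorted(store, key=lambda m: cosine(m.vector, query_vector), reverse=True)
    return ranked[:top_k]


# Toy vectors: pretend the first axis means "pricing discussion".
store = [
    Memory("pricing.pdf", "pdf", [0.9, 0.1, 0.0]),
    Memory("sales_call.mp3", "audio", [0.8, 0.2, 0.1]),
    Memory("logo.png", "image", [0.0, 0.1, 0.9]),
]
query = [1.0, 0.0, 0.0]  # stand-in for an embedded text query about pricing
for hit in search(store, query):
    print(hit.source, hit.modality)
```

Because every item lives in the same space, the text query surfaces both the PDF and the audio recording without any per-modality routing.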

Here are a few standout opportunities from companies building at the edge of AI. Each role is selected for impact, growth potential, and relevance to our community.

Software Engineer, Clicks, San Francisco ($120K – $200K - 0.50% – 2.00%): “Build and optimize agentic AI capabilities and core infrastructure for a YC-backed startup that gives every AI agent its own computer — letting it perceive screens and act through mouse and keyboard to fully automate back-office work for companies worldwide.”

Software Engineer, Voice AI, Lightberry, San Francisco ($150K – $225K - 0.25% – 1.00%): “Improve composite voice pipelines, knowledge storage and retrieval, and latency-sensitive cloud systems that make humanoid robots — including those from the #1 humanoid robot manufacturer in the world — intelligently listen, speak, and interact with people in real time.”

Founding AI Engineer, Uplane, San Francisco ($150K – $200K - 0.50% – 1.50%): “Design and build scalable LangGraph-based AI systems and data analytics infrastructure that power an autonomous advertising autopilot — one that generates, tests, and optimizes campaigns end-to-end, replacing the patchwork of agencies and tools that waste billions in ad spend today.”

Staff Software Engineer, Idler, San Francisco ($300K – $400K - 0.75% – 2.00%): “Lead the design of scalable agentic systems that generate and QA reinforcement learning environments for frontier AI models — working directly with researchers at leading labs at a company that has already closed the largest training data contract a foundation lab has ever issued.”

Our Premier Partner: Roam

Roam is the virtual workspace our team relies on to stay connected across time zones. It makes collaboration feel natural with shared spaces, private rooms, and built-in AI tools.

Roam’s focus on human-centered collaboration is why they’re our Premier Partner, supporting our mission to connect the builders and leaders shaping the future of AI.

Experience Roam yourself with a free 14-day trial!

➡️ Before You Go

Partner With Us

Launching a new product or hosting an event? Put your work in front of our global audience of builders, founders, and operators — we feature select products and announcements that offer real value to our readers.

👉 To be featured or sponsor a placement, reach out to our team.

The AI Collective is a community of volunteers, made for volunteers. All proceeds directly fund future initiatives that benefit this community.

Stay Connected

💬 Slack: AI Collective

🧑‍💼 LinkedIn: The AI Collective

𝕏 Twitter / X: @_AI_Collective

About the Authors

About Joy Dong

Joy is a news editor, writer, and entrepreneur at the forefront of the emerging tech landscape. A former educator turned media strategist, she currently anchors TEA, where she demystifies complex systems to make AI and blockchain accessible for all. Joy is on a mission to explore how decentralized technology and artificial intelligence can be leveraged to build a more innovative and transparent future.

About Noah Frank

Noah is a researcher, innovation strategist, and ex-founder thinking and writing about the future of AI. His work and body of research focus on aligning governance strategies to anticipate transformative change before it happens.