Upcoming Events

🌁 SF Bay Area

Tue, Apr 15th: AI Showdown: Pitch, Compete, Win ✨ (w/ Alumni Ventures)

Thu, Apr 17th: Exploring Generative UI 📟 (w/ Thesys)

Thu, Apr 17th: Living Room Chats for AI Founders

Wed, Apr 23rd: Game Night! (Marin County)

🗓️ Hungry for even more AI events? Check out SF IRL, MLOps SF, or Cerebral Valley’s spreadsheet!

🗽 New York

Tue, Apr 29th: AI Frontiers: NYU Roundtable

🏛️ DC

Wed, Apr 16th: AI Insiders Roundtable

🔥 Austin

🇬🇧 London

Thu, May 8th: London Demo Night! 💂🏼‍♂️🚀

Future-Proofing Learning: Rethinking Education Systems in the Age of AI

Guest Article

A New Era for Higher Education

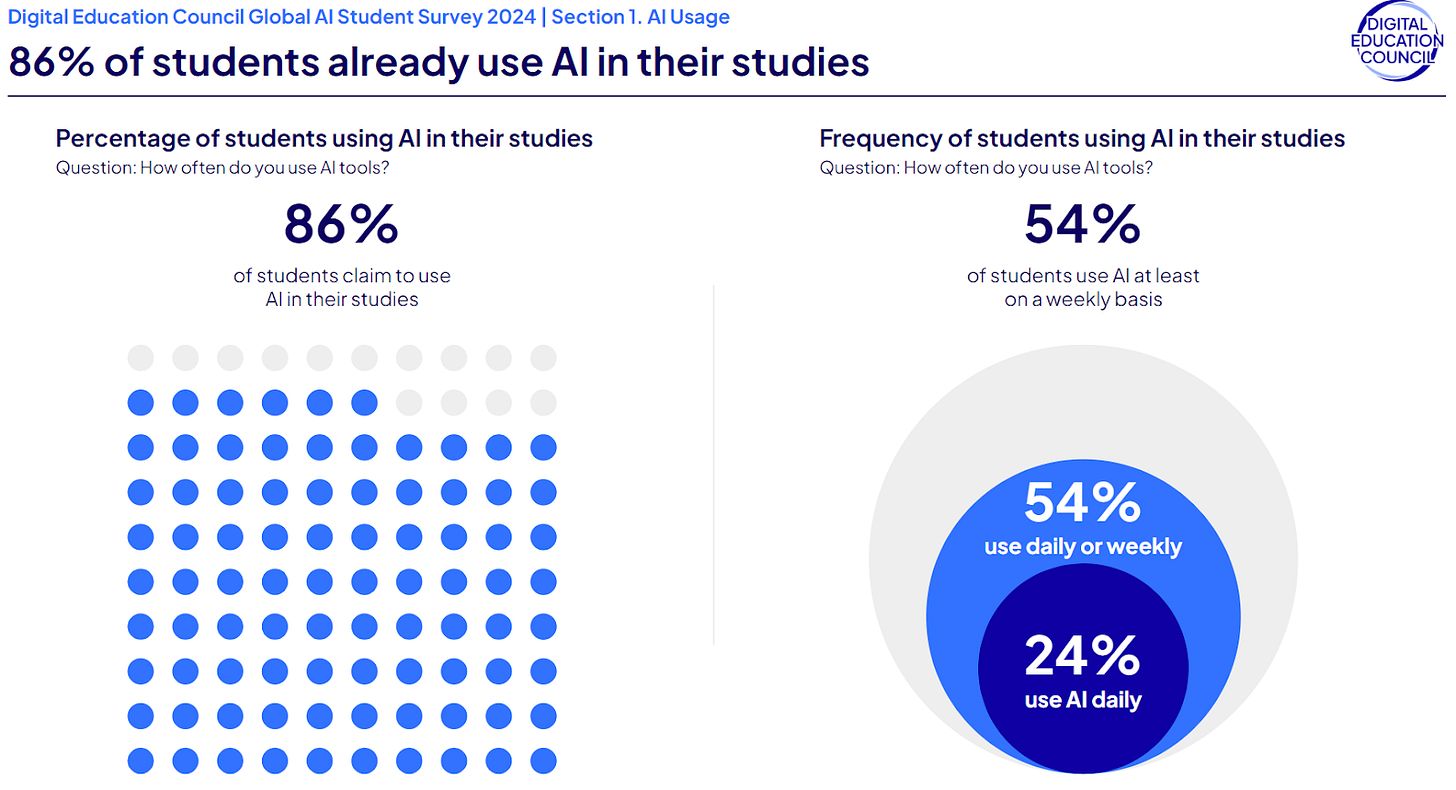

The use of AI tools by university students has surged across the globe. From the United States to Asia and beyond, learners are turning to generative AI systems like ChatGPT for help with essays, coding, and study support. AI is becoming entrenched in students’ daily routines.

In one recent example, an assembly at a university in Asia found that, of the few hundred students present who had used ChatGPT, 80% had done so in the past 24 hours. By contrast, a few weeks ago at Yale, students taking an intermediate-level CS course woke up to an ultimatum. In a single problem set, one-third of students’ work had “clear evidence of AI usage,” the course head said. They could either confess or risk a zero and a referral to the academic integrity committee, with potential delays in final grades that could affect internship opportunities.

Clearly, there is a disconnect between students, staff and the institutions themselves. The conversation has already shifted from “How do we stop students from using AI?” to “How can we teach students to use AI ethically and effectively?”

As AI tools become more sophisticated and accessible, universities must navigate these challenges: Should AI be regulated in classrooms, and if so, how? Where should the line be drawn for usage? How do universities ensure consistent policies that affect all students equally? And most importantly, how can higher education institutions both adapt to the challenges and capitalize on the opportunities posed by AI in academia?

Inaccuracies in Detection, Unfair Bias and New Divides

The controversy over AI and integrity is further complicated by concerns that detection methods can introduce new forms of bias. AI-writing detectors (such as those integrated into plagiarism software) do not catch cheating perfectly – in fact, they frequently produce false positives, unfairly accusing students who haven’t used AI at all.

Studies have found that these tools disproportionately flag certain groups. Writing by non-native English speakers is more often mislabeled as “AI-generated” simply because it uses simpler vocabulary and grammar – one Stanford-led study showed detectors falsely marked 61% of essays by non-native English writers as AI-written.

Likewise, neurodivergent students (those with autism, ADHD, dyslexia, etc.) are prone to being misclassified by AI checkers due to atypical writing patterns or phrasing. These biases mean that English-as-second-language (ESL) and neurodivergent learners face a higher risk of unwarranted suspicion under AI scrutiny.

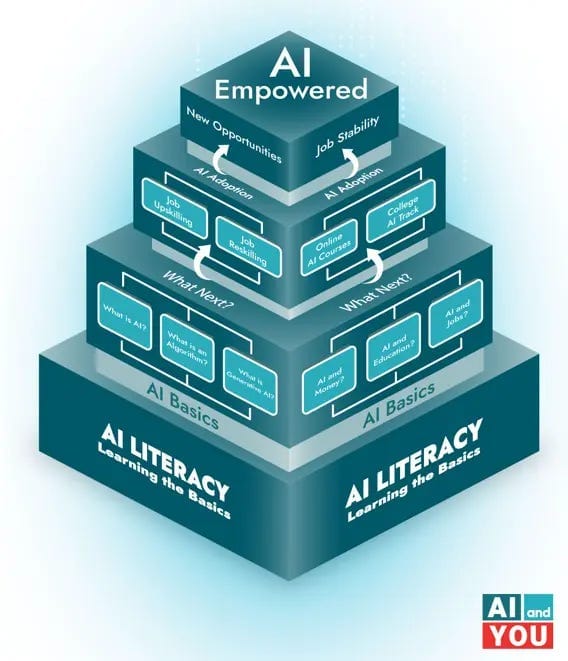

Beyond neurodivergence and language barriers, another concern looms: the digital divide. Students who are not AI-literate or lack access to the latest tools are already at a disadvantage.

Without targeted support, they are likely to fall further behind their peers who use AI to streamline their studies or deepen understanding. This imbalance could mirror—and exacerbate—existing socio-economic disparities in higher education. If left unchecked, AI may create a two-tier system: those who benefit from its tools, and those penalized by their absence.

The Assistive Power of AI

If AI is used correctly – but who can define correctly? – then it can help both students and staff be more efficient and productive. Lyssa Vanderbeek from Wiley, the global leader in publication, education and research, says, “academic integrity and cheating are not new concepts, they have been around a long time.” So how do we adapt to this change in a way that produces positive outcomes for staff and students?

A contributing factor to the divided opinions on the use of AI in education is that there is no agreed-upon definition of cheating. This gap opens a broader dialogue about what ethical ways of ‘receiving help’ look like: should it matter if the same advice comes from a teacher versus ChatGPT?

Rather than simply banning AI or leaving a free-for-all, many advocate for a middle path that treats AI as a tool – one that can be used ethically with proper disclosure and for the right purposes. UNESCO has created AI Competency Frameworks for students and teachers to help develop AI education strategies that are ethically informed, inclusive, adaptable and forward-looking. Taking this middle path means updating academic integrity definitions to include AI assistance, but also training educators to design assignments that focus on human skills (critical thinking, personal reflection, creative reasoning) which AI cannot easily fabricate.

For example, students can be taught to use AI as a learning aid, to get feedback on a draft or explore a concept, without crossing into academic dishonesty. It also means AI could be used to adapt to different learning styles, supporting each student according to their strengths and weaknesses. Providing resources (like workshops or modules on AI literacy) so that no student is left behind in the AI-enabled classroom is key. The goal is to integrate AI in a way that upholds the spirit of learning and fairness, turning a challenge into an opportunity to modernize pedagogy.

From Grey Areas to Guidelines

Encouragingly, some higher education institutions have started pioneering creative frameworks for AI usage instead of relying on punishment alone. The University of Texas at Arlington recently introduced a four-tier system that categorises permissible AI use in courses—from complete bans to full integration with citation. Meanwhile, St. Pölten University in Austria launched the Higher Education Act for AI (HEAT-AI), a risk-based framework modelled on EU digital standards, which helps align AI use with educational values, privacy concerns, and institutional integrity.

By defining how to leverage AI and where to draw red lines, frameworks like these aim to protect the core values of education (honesty, rigor, equity) while still preparing students for a future where AI is ubiquitous.

Shaping a Future-Ready Education System

The question is not whether AI will change higher education—it already has.

Yes, there are valid fears: academic dishonesty, uneven policy enforcement, biases against certain student groups, and widened inequality. But there are also profound opportunities: personalized tutoring at scale, new ways to engage with material, and the chance to equip students with AI fluency as a career skill.

Some key reform suggestions include:

adopting institution-wide guidelines that clearly define how AI can be used in different contexts

training staff in both the risks and benefits of AI to ensure consistent application

developing support systems for students from underrepresented or digitally excluded groups, ensuring no one is left behind

rethinking assessment methods, moving beyond formats that AI can easily replicate toward tasks that emphasise human insight and originality

The path forward is neither to banish AI nor to blindly accept it, but to adapt our educational norms and systems to create a future-ready education system that benefits everyone.

Partner Spotlight: Snowflake

Anthropic’s Claude 3.5 Sonnet is now generally available in Snowflake Cortex AI. Join us for a hands-on lab to learn how to build retrieval-augmented generation (RAG) and agent-based AI applications using the Sonnet model within Snowflake Cortex AI’s secure environment. Expect live coding, real-world use cases, and an interactive demo to get you started.

What you’ll learn:

How to build AI-powered apps using Claude 3.5 Sonnet in Snowflake Cortex AI

Ways to implement RAG for accurate, grounded AI responses

Best practices for deploying AI securely with SQL, Python and REST APIs under Snowflake’s data governance model

Join us to explore the future of AI development — register now!

Partner Spotlight: AI Rabbit Hole 2025

We’ve partnered with AI Rabbit Hole 2025 — one of the most anticipated AI conferences of the year — happening April 24th in San Francisco.

This event will bring together founders, builders, investors, and AI leaders from OpenAI, Perplexity, Grammarly, M12, NASA, Stanford, and more.

As a member of our community, you get exclusive early access to heavily discounted tickets:

💥 50% OFF for the first 20 attendees: use code GAIC50

💥 30% OFF for everyone after: use code GAIC30

If you’re building in AI, this is the room to be in.

Join the Community!

💬 Slack: GenAI Collective

𝕏 Twitter / X: @GenAICollective

🧑‍💼 LinkedIn: The GenAI Collective

📸 Instagram: @GenAICollective

We are a volunteer, non-profit organization – all proceeds solely fund future efforts for the benefit of this incredible community!

Our Premier Partners

Premier Partners are values-aligned leaders who invest in the future of AI by supporting the world’s most vibrant grassroots community. We thank them immensely for their ongoing support! 😄

About Jasmine Hasmatali

Jasmine Hasmatali is a former founder, recent Master's graduate and Communications Director at Aurix. She holds a Master of Laws in Human Rights from the University of Edinburgh and is passionate about using her legal background to advocate for inclusive AI futures that benefit everyone. Her research is oriented towards bridging the gap between rights, technology and society. When not working, you can find her with friends, at the gym, or travelling if she’s lucky.

About Noah Frank

Noah is the co-founder of Aurix and has spent his career both working at startups and advising global leaders on innovation strategy. His work and body of research focus on AI policy, anticipatory governance, and effective decision-making. When not working to make emerging tech work for all, you can find him making music with his band. 🎸