We are always trying to improve with feedback from our community. Help shape the next chapter of the AI Collective Community Newsletter by taking this 30-second pulse check!

Upcoming Events

🌁 SF Bay Area

Wed, May 28th: SF DEMO NIGHT 🚀 (w/ The GenAI Collective)

Wed, Jun 4th: 🧠 GenAI Collective SF | Special Mystery Event ✨

🗓️ Hungry for even more AI events? Check out SF IRL, MLOps SF, or Cerebral Valley’s spreadsheet!

🏙️ Chicago

Tue, Jun 24th: GenAI Collective Chicago: The Future of Work

🗻 Denver

Wed, Jun 11th: 🧠 GenAI Collective Denver 🧠 Discussion Meetup by Roam

🗽 New York

Fri, May 30th: Hacking Agents Hackathon NYC

Wed, Jun 4th: 🧠 GenAI Collective NYC | Special Mystery Event ✨

Thu, Jun 12th: 🧠 GenAI Collective 🧠 NYC Demo Night 🚀

New York’s AI community is thriving, and the Collective Community is back for another night of cutting-edge demos, real-time feedback, and quality time with some familiar faces.

On June 12th, 8 startups will take the stage to showcase their products. If you would like to demo and receive feedback from the community, please click the button below and fill out the form! Submissions close the Saturday before the event at 11:59pm.

🍁 Toronto

Tue, May 27th: AI for Bloggers & Content Creators ✍️🚀

Thu, Jun 5th: 🧠 GenAI Collective Toronto | Special Mystery Event ✨

☔️ Seattle

Tue, May 27th: Seattle Demo Day – ROAM

🇨🇦 Vancouver

[LAUNCH! 🚀] Thu, May 29th: GenAI Collective Vancouver Kick-off 🇨🇦

🏛️ Washington D.C.

Fri, Jun 6th: 🧠 GenAI Collective DC | Special Mystery Event ✨

Regulate Later, Win Now? The AI Ethics Gamble on Capitol Hill

Guest post by Liel Zino, Policy Lead @GenAI Collective

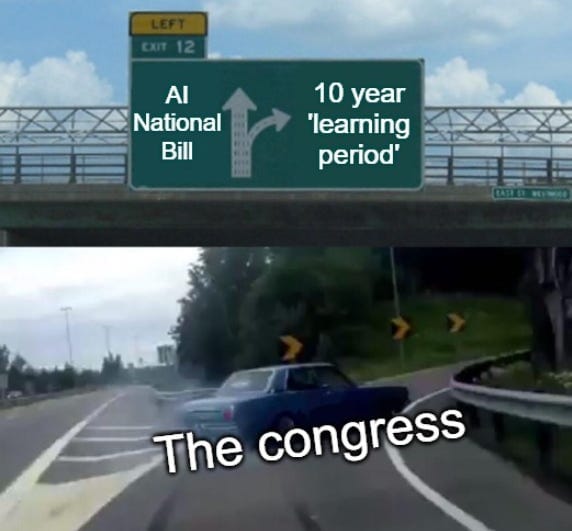

Last week, House Republicans shocked Washington by adding a new clause to the tax bill (H.R. 1) that would ban states and local authorities from regulating AI for 10 years under what they call a “learning period.” This small but powerful provision, cleverly buried in Section 43201(c) of the House Energy & Commerce Committee’s budget reconciliation text and most recently passed by the House of Representatives, has huge implications.

Any such long-term regulatory freeze would be deeply problematic under normal circumstances, but it’s even more troubling when applied to a fast-evolving technology that is already reshaping our lives, workforce, education, and society.

If passed, this law would impose a decade-long freeze on state-level AI regulation. It would also nullify active legislative efforts across the country, including 20+ laws enacted last year in California and new proposals like New York’s AI package and Connecticut’s SB-2. This sweeping preemption comes at a time when AI governance is gaining momentum nationwide. In 2024 alone, nearly 600 draft bills related to AI regulation were introduced across 45 states, and U.S. federal agencies issued 59 AI-related regulations (more than double the number in 2023!).

So why now, and who’s actually gaining from this?

America First, Ethics Second?

House Republicans claim that the new moratorium is designed to ensure AI innovators are free to invest, create, and win the global AI leadership race against China and the rest of the world, a major strategic goal for the U.S. Just as critically, they argue that the 10-year ban will serve as a ‘learning period’, allowing the field to fully unfold and giving the federal government time to develop a cohesive national framework and standards that reflect AI’s true transformative power.

Congress has been notoriously slow in its AI regulation journey, and has so far avoided creating a cohesive national AI bill, unlike its European counterparts. In the absence of congressional action, states stepped in to build their own guardrails and standards, resulting in an almost patchwork-like regulatory landscape across the nation. The federal government’s hesitant and sluggish approach to AI regulation becomes even more glaring when it not only delays its own action but actively seeks to block state-level efforts.

Think tanks and policy organizations such as the Center for AI and Digital Policy (CAIDP) have also tried to fill the gap by actively working to build ethical AI frameworks and offering guidance for other stakeholders to use. Some have even compared the lack of regulation in AI to the lack of social media oversight a decade ago, an issue whose effects are still felt today.

In every way, the biggest winners are undoubtedly the large tech companies. They stand to benefit enormously from the absence of government oversight, operating without constraints from any regulatory body. The prospect of a 10-year ‘free roaming’ period grants these companies unchecked power to shape the future of AI, influence societal norms, and define the landscape of privacy, security, and data governance entirely on their own terms, all while prioritizing their financial, business, and power interests above the public good.

Is This Actually Going To Happen?

Realistically, although it has caused significant panic among many AI ethics and policy professionals, this moratorium has very slim chances of being signed into law. Quite frankly, even if it is, many AI companies would still be subject to regulation under the EU AI Act and other international frameworks.

Still, this moment offers a valuable glimpse into how the current administration and Congress view AI and technology regulation moving forward. As AI continues to evolve rapidly and gain increasingly powerful capabilities, it’s becoming clear that the federal government may struggle to keep pace with adequate legislation to ensure AI is developed and deployed for the public good and by actors operating in good faith. Congress’s inclination to assert its own authority while curbing state-level initiatives, without offering a proactive federal alternative, combined with the rapid advancement of the technology at stake, creates a precarious environment. In this vacuum, bad actors may find ample opportunity to exploit the situation for their own gain.

Final Thoughts: Ten Years, One Blind Spot

In the technology sector, 10 years is not just a decade; it is an entire era. Placing a regulatory freeze on AI for that long at the national level is a massive risk, especially given how rapidly the technology is evolving and the presence of bad-faith actors in the ecosystem.

Moreover, the already fragile balance between regulating and enabling innovation is more delicate than ever. AI adds a new dimension that we can no longer ignore: public trust. In the United States, public trust in AI stands at just 32%, compared to 72% in China, according to the Edelman Trust Institute. In fact, public trust in AI is higher not just in China, but across much of the developing world compared to the United States.

Congress must recognize that to truly lead in AI, it must craft cohesive yet enabling regulation with public trust at the center. Winning the global AI race will ultimately depend on how people perceive and trust this technology, and that trust is closely tied to how they perceive their government’s ability to responsibly regulate it. Otherwise, we may win the innovation race abroad but lose something far more critical at home.

Event Spotlight

🌁 SF Bay Area: Frontier Founders AI Dinner

The Collective hosted an intimate dinner for founders at the edge of AI innovation, creating space for sharp minds to connect over shared challenges, full plates, and honest conversation. With guests flying in from across the globe, the table brought together builders navigating fundraising, go-to-market strategy, team building, and product storytelling.

Held in a private dining room tucked away in Potrero Hill, the evening delivered more than great food. It offered a rare chance for early-stage founders to trade real insights in a setting designed for connection and trust. Backed by Fidelity Private Shares, this was a night that reminded everyone at the table that while the work is hard, no one has to do it alone.

Join the Community!

💬 Slack: GenAI Collective

𝕏 Twitter / X: @GenAICollective

🧑‍💼 LinkedIn: The GenAI Collective

📸 Instagram: @GenAICollective

We are a volunteer, non-profit organization. All proceeds solely fund future efforts for the benefit of this incredible community!

Our Premier Partners

Premier Partners are values-aligned leaders who invest in the future of AI by supporting the world’s most vibrant grassroots community. We thank them immensely for their ongoing support! 😄

About Liel Zino

Liel Zino is a tech policy professional with a background in digital transformation, AI governance, and public innovation. She has worked across government and civil society both nationally and internationally to advance responsible AI adoption and inclusive digital policy. Liel also serves as Policy Lead at the GenAI Collective.

About Noah Frank

Noah is the co-founder of Aurix and has spent his career both working at startups and advising global leaders on innovation strategy. His work and body of research focus on AI policy, anticipatory governance, and effective decision-making. When not working to make emerging tech work for all, you can find him making music with his band. 🎸

About Eric Fett

Eric leads the development of the newsletter and online presence. He is currently an investor at NGP Capital where he focuses on Series A/B investments across enterprise AI, cybersecurity, and industrial technology. He’s passionate about working with early-stage visionaries on their quest to create a better future. When not working, you can find him on a soccer field or at a sushi bar! 🍣