In this week’s edition of The Byte, Liel Zino, Policy Director at the AI Collective Institute, explores the role of trust as a pillar of AI governance. Drawing on data from 47 countries, our research maps the landscape of trust in AI globally and offers concrete recommendations on what we can do about it.

View the interactive dashboard on the AI Collective Institute’s site ⬇️

Can We Regulate Trust? Building a Human Foundation for the AI Era

Trust is not a soft or secondary issue. It is the foundation that allows societies to embrace new technologies. Without it, even the most advanced systems fail to take hold. With it, innovations become integrated into daily routines and institutions. Trust is what enables AI to move from an experimental tool to an accepted part of our complex, multi-layered society.

Artificial intelligence has shifted from a futuristic promise to a present force that shapes our daily lives. From the recommendation engines that guide our choices to the algorithms influencing hiring, healthcare, and law enforcement, AI is no longer confined to research labs.

Surveys consistently show that public confidence in AI lags behind trust in the broader technology sector and continues to decline year after year.

In the United States, the KPMG 2025 Global Trust in AI Survey reported a drop in public trust from 61% to 53% over the past five years, one of the lowest levels among developed economies.

Why trust in AI matters

Public trust in AI is not only a moral concern but also a practical necessity. AI systems rely heavily on human interaction to function, learn, and improve. Without broad public confidence and engagement, these systems cannot reach their full potential, and the innovation that is critical to economic growth, scientific advancement, and global competitiveness slows down. Even more importantly, if technological progress outpaces public trust, AI risks becoming a tool of the privileged few, deepening inequality, widening gaps in resources and opportunities, and raising new and much higher barriers to success. Rather than expanding possibilities, AI could narrow them, leaving most people with fewer options than before.

For these reasons and more, many governments have turned to regulation. The logic is straightforward: rules reassure people, guardrails signal responsibility, and regulation should, in theory, increase trust. But does it actually work that way?

Can we really regulate trust?

This question was at the heart of our recent report, Can We Regulate Trust?, a global analysis of the relationship between national AI regulation and public trust. The study analyzed 47 countries, examining whether the presence of national AI regulation correlates with higher levels of public trust. The findings were surprising yet clear: there is no significant relationship between regulation and trust. Countries with strong national regulatory frameworks in place did not show higher levels of public trust in AI than those without them.

Instead, the strongest predictor of trust was daily use of AI. In countries where citizens use AI on a daily basis, levels of trust were higher.

Familiarity and lived experience mattered more than top-down rules and made people fear less and trust more.

This suggests we may be focusing on the wrong issue. Regulation is still vital for protecting rights, ensuring accountability, and managing risks, but our findings suggest it does not, on its own, increase trust. A national AI regulatory framework, while valuable, is not a finish line or a guarantee of success. It is one step out of many, and it is insufficient to make the public feel safe and able to trust this new, transformative technology. It sets the guardrails, but it does not inspire confidence.

Building human infrastructures

If regulation cannot build trust on its own, then the question becomes: what can?

If we want AI to serve society broadly and equitably, we must elevate public trust to the center of the policy conversation. That means going beyond regulation alone and developing a more comprehensive approach built on three main areas:

AI literacy: Too often, public debate frames AI as a looming, threatening force that will take away jobs, freedoms, or even human agency. We must change that narrative by equipping citizens with real, practical knowledge: how AI works in broad terms, what its limitations are, how they should engage with it and what they can genuinely gain from interacting with it. Literacy programs should extend across schools, workplaces, and communities. Just as digital literacy became essential during the internet era, AI literacy must become the civic infrastructure of the AI age.

Responsible public sector adoption: Governments should lead by example and implement AI in public services with transparency. When citizens see AI deployed responsibly and experience firsthand how it improves their dealings with public institutions, they receive tangible proof that AI can ease everyday burdens and align with societal values. These encounters can act as trust signals.

Collaboration across sectors: Governments cannot do this alone. Industry, academia, and civil society must share responsibility for building trust. Public–private partnerships should be expanded to ensure this technology is developed and deployed responsibly, without risking people’s rights or well-being.

Rethinking action

The lesson from this research is clear. Regulation is essential, but it is not sufficient. To truly govern AI responsibly, we must build human infrastructures alongside regulatory ones. That means investing heavily in AI literacy, designing public-facing systems that model transparency, and creating collaborative structures that put people at the center.

The future of AI will not be determined only in legislative chambers or corporate boardrooms. It will be decided in classrooms, hospitals, workplaces, and homes: the places where people encounter AI and decide whether to accept it. That is why policymakers and technologists must lead with a human approach.

Trust cannot be legislated into existence. It must be earned through experience, education, and inclusion. Without it, regulation will remain a paper shield at best. With it, AI can become not just a transformative technology, but a trusted partner in building a more inclusive and human-centered future.

Ultimately, increasing public trust needs to be a central priority for governments and policymakers. Governments that fail to act decisively today risk being sidelined in the AI-driven world of tomorrow.

To access the full report, use the link below.

Thanks for reading The Byte!

The Byte is The AI Collective’s insight series highlighting non-obvious AI trends and the people uncovering them, curated by Noah Frank and Josh Evans. Questions or pitches: [email protected].

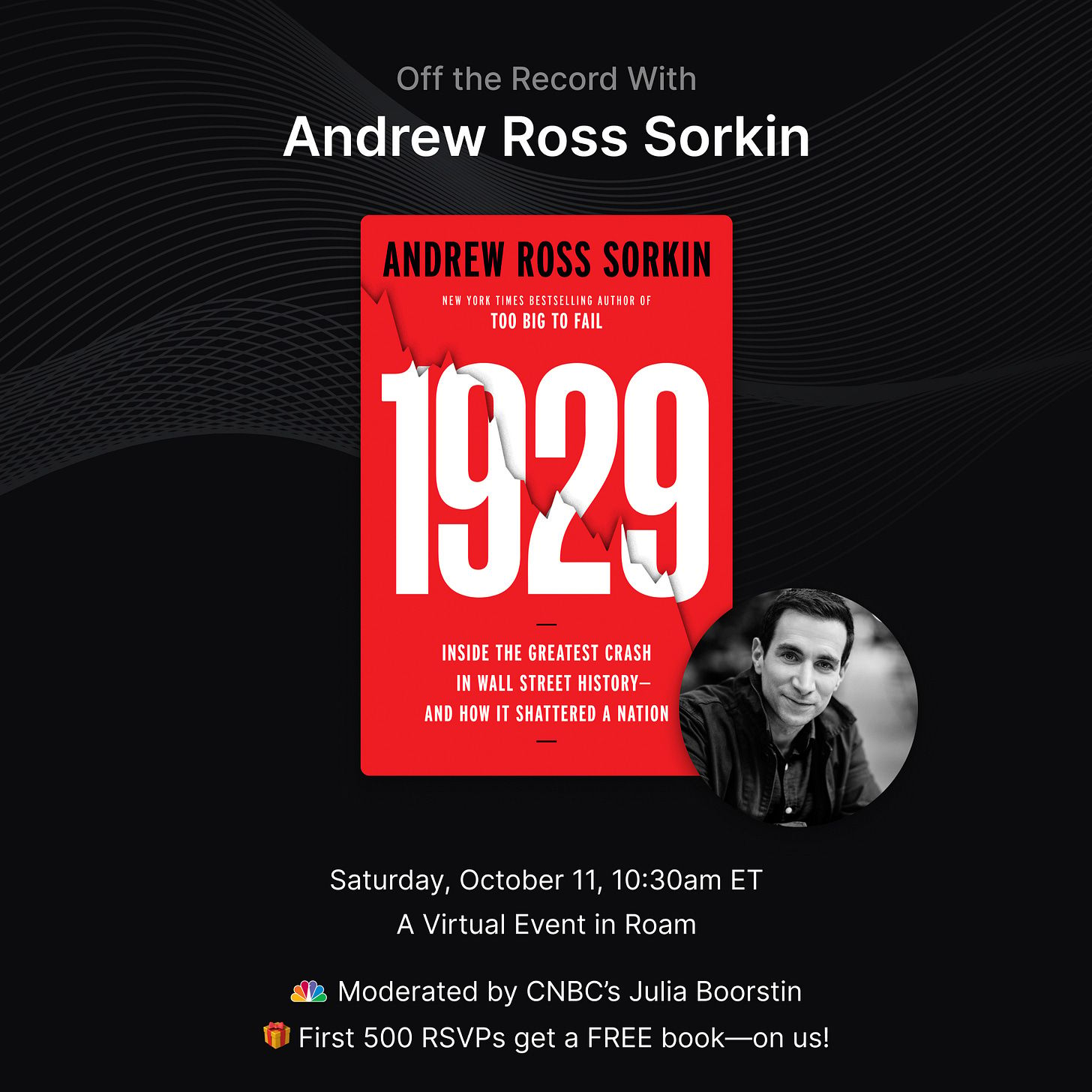

Premium Partner Spotlight: Roam

Step into an evening with Andrew Ross Sorkin - one of the most influential voices in modern finance. The award-winning journalist, bestselling author of Too Big to Fail, and co-creator of Showtime’s Billions will bring his unmatched storytelling to life as he unpacks 1929, his spellbinding new account of the most infamous stock market crash in history. This exclusive event promises rare insight from a master narrator whose work has shaped how the world understands power, money, and crisis.

The AI Collective is driven by our team of passionate volunteers. All proceeds fund future programs for the benefit of our members.

About Liel Zino

Liel Zino is Policy Director at the AI Collective Institute, where she leads research on global trust in AI governance and the role of human-centered approaches in shaping policy.