It's Friday, April 3rd: Two years later, with the generative AI stack now bringing in roughly $435 billion a year, the companies selling AI chips still capture nearly 80% of the profits while app makers fight over what's left. A new framework argues that at least 75% of knowledge work is maintenance overhead waiting to be automated. This week, we dig into both.

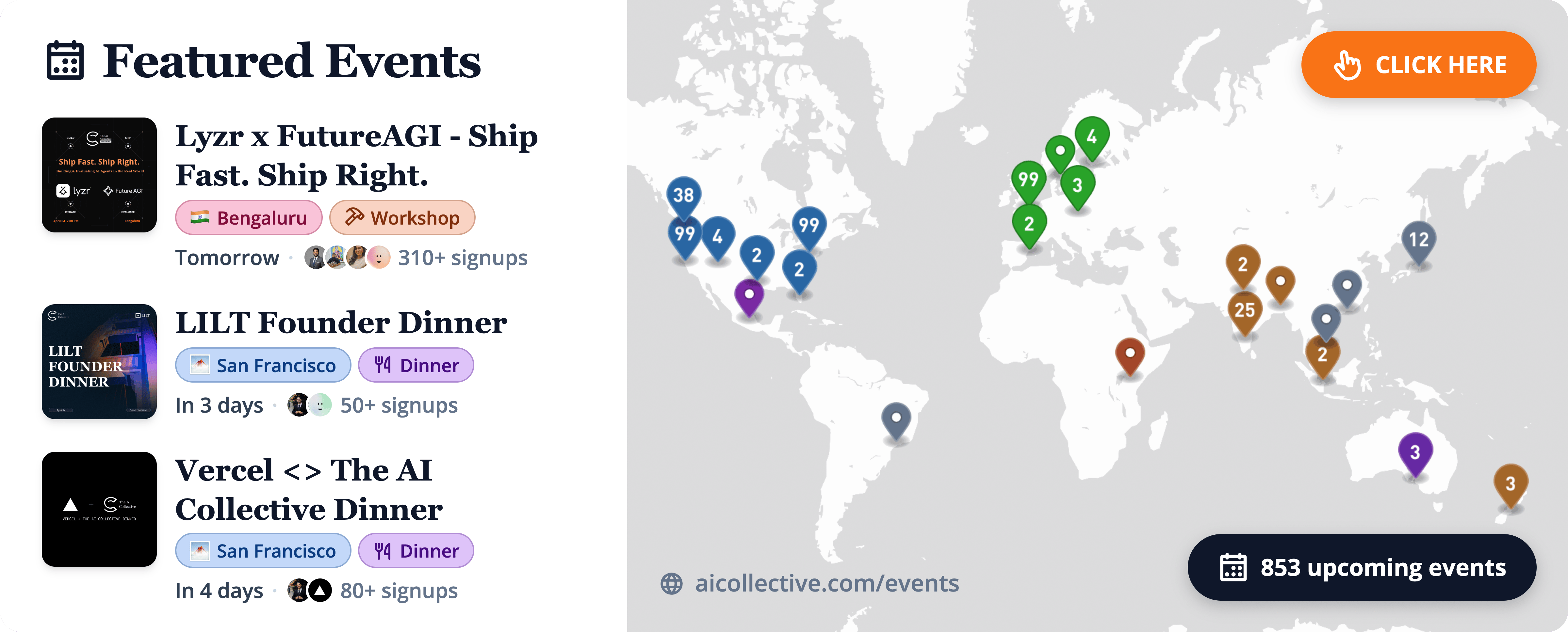

Head over to our Events Portal to get the latest on upcoming AI Collective events near you. Search by city, date, or event format, and join thousands of builders at events across 100+ chapters on every continent (except Antarctica, for now).

Find an event in your city using the link below. 👇

In this section, we’ll introduce you to Khushpreet Kaur, our Collective Member of the Week. Look out for a new spotlight from a member of our community each week.

Q: What has your AI journey looked like so far?

Unlike a lot of builders, my interest in AI started with a paper called AI Newton, which explored fundamental laws of physics through simulations run without any built-in physical principles. The very idea of rediscovering physical laws with such power was fascinating.

As a physics graduate, what drew me to AI was the practical replication of human intelligence through matrices, numbers, and gradients. AIC was a space where I could explore this in more depth with people who had the actual know-how.

Q: What has been your favorite experience at AIC?

My favourite event to date is the healthcare buildathon we recently ran. We brought together technical experts, clinicians, and investors, all in one room.

What came out were real ideas, with investors judging and ready to invest, and clinicians collaborating with tech experts to assess feasibility. This felt like the peak of community building to me.

Q: What do you want the world to know about your work?

I am hosting conversations around gender, AI, and agency. I want to explore more intersections in the AI space, which I see as largely occupied by men, and make it a more intersectional space.

At the AIC Delhi chapter, we built the community from the ground up. My biggest fear was figuring out how, but my teammates and I just executed. We listened to people, and the experiences we curated became more and more aligned with what they wanted. We were able to engage people meaningfully.

For other chapters, I think the biggest takeaway is to focus on listening and iteration. If you stay close to your community and build with them, the direction becomes much clearer.

Here, we feature a few standout stories from creators in our network.

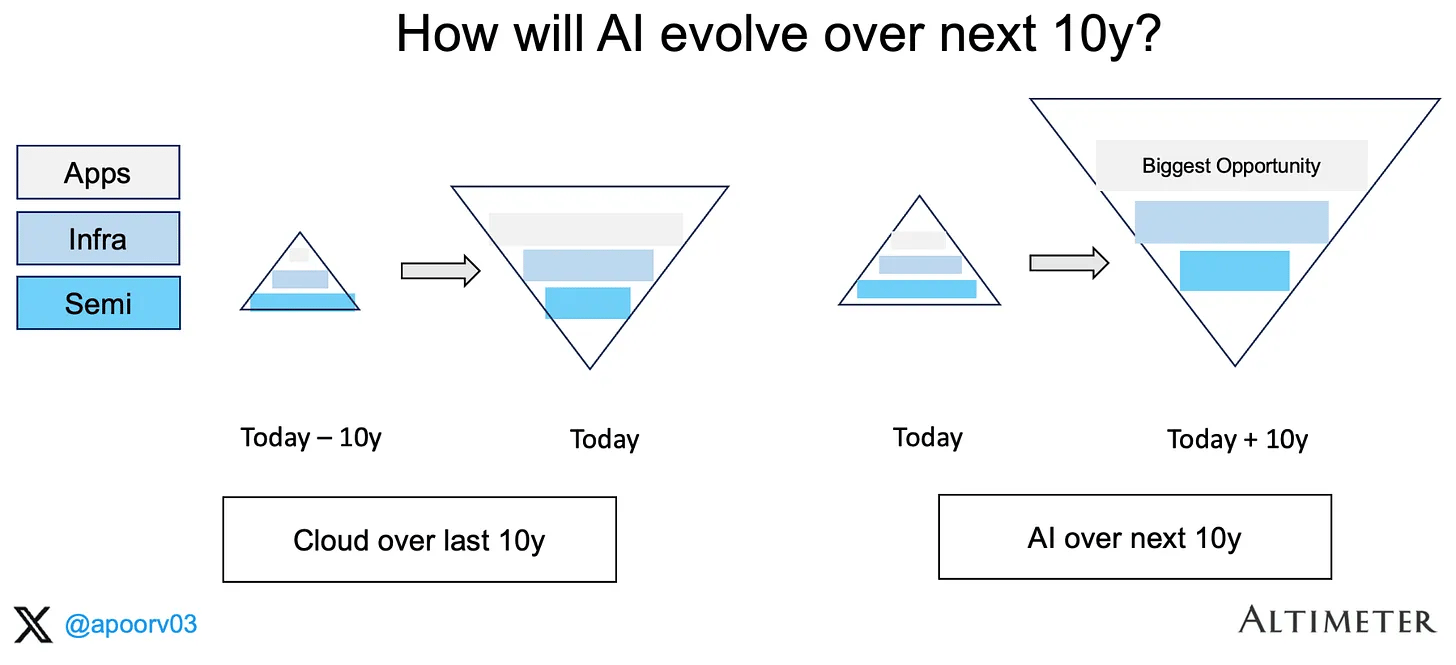

💰 The AI Gold Rush Is Two Years Old… But the Shovel Sellers Are Still Winning

Apoorv Agrawal revisited his 2024 analysis of the generative AI value chain and found that the stack looks almost exactly the same. The ecosystem grew 5x to roughly $435 billion in annual revenue, but semiconductors still capture 79% of gross profits. Application-layer companies, including OpenAI and Anthropic, operate at roughly 33% gross margins. The inversion everyone predicted, with profits migrating up the stack toward applications, has barely started.

The numbers are stark. NVIDIA's data center business now annualizes to roughly $250 billion, and Broadcom adds $34 billion through custom accelerators. Together with other chipmakers, the semiconductor layer accounts for about $300 billion of the $435 billion total. Infrastructure players like Azure, AWS, and GCP split roughly $75 billion. The entire application layer, the part most people actually interact with, sits at around $60 billion, with OpenAI and Anthropic making up three-quarters of that.

Hyperscalers spent $443 billion on capex in 2025, up 73% from the year before, with another $450 billion projected for 2026. Their CEOs say they're monetizing capacity as fast as they add it, but the evidence remains vague. Agrawal notes that on the current trajectory, the app layer would need over a decade to reach a profit share comparable to earlier technology platforms.

Custom silicon is the thing to watch. Amazon already has 1.4 million Trainium2 chips deployed, and its custom chip business has hit a $10 billion annual run rate while growing at triple-digit rates. Google's TPUs are mature. OpenAI has an ASIC partnership with Broadcom. If any of these programs reach competitive scale, NVIDIA's margins compress and the whole stack reprices. History says most ASIC programs fail, but the incentives have never been this large. For builders, the takeaway is that selling infrastructure is still the best AI business today, and the application layer has yet to prove its unit economics can hold up.
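For readers who want to check the math, here's a quick Python sketch using the approximate figures quoted above (rounded estimates, not audited data). It splits the $435 billion by layer and backs out the 2024 capex base implied by the 73% jump. One thing it makes visible: semiconductors take about 69% of revenue but 79% of gross profit, which only works if their margins run above the stack-wide average.

```python
# Rough annual revenue by layer of the generative AI stack, in billions of USD,
# using the approximate figures quoted in the piece (not audited data).
REVENUE_BY_LAYER = {
    "semiconductors": 300.0,  # NVIDIA data center (~250) + Broadcom (~34) + other chipmakers
    "infrastructure": 75.0,   # Azure, AWS, GCP
    "applications": 60.0,     # OpenAI and Anthropic are roughly three-quarters of this
}


def layer_shares(revenue_by_layer: dict[str, float]) -> dict[str, float]:
    """Return each layer's fraction of total revenue across the stack."""
    total = sum(revenue_by_layer.values())
    return {layer: rev / total for layer, rev in revenue_by_layer.items()}


def implied_prior_year(current: float, growth_rate: float) -> float:
    """Back out last year's value from this year's value and its growth rate."""
    return current / (1 + growth_rate)


if __name__ == "__main__":
    total = sum(REVENUE_BY_LAYER.values())
    print(f"Total stack revenue: ${total:.0f}B")
    for layer, share in layer_shares(REVENUE_BY_LAYER).items():
        print(f"  {layer:<15} {share:.0%} of revenue")

    # Chips take ~69% of revenue but ~79% of gross profit. A layer whose profit
    # share exceeds its revenue share must have an above-average gross margin.
    named_chipmakers = 250.0 + 34.0
    print(f"NVIDIA + Broadcom: ${named_chipmakers:.0f}B of the ~$300B chip layer")

    # Hyperscaler capex: $443B in 2025, up 73% year over year.
    print(f"Implied 2024 capex: ${implied_prior_year(443.0, 0.73):.0f}B")
```

The revenue split lands at roughly 69% chips, 17% infrastructure, and 14% applications, and the capex growth implies a 2024 base of about $256 billion.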

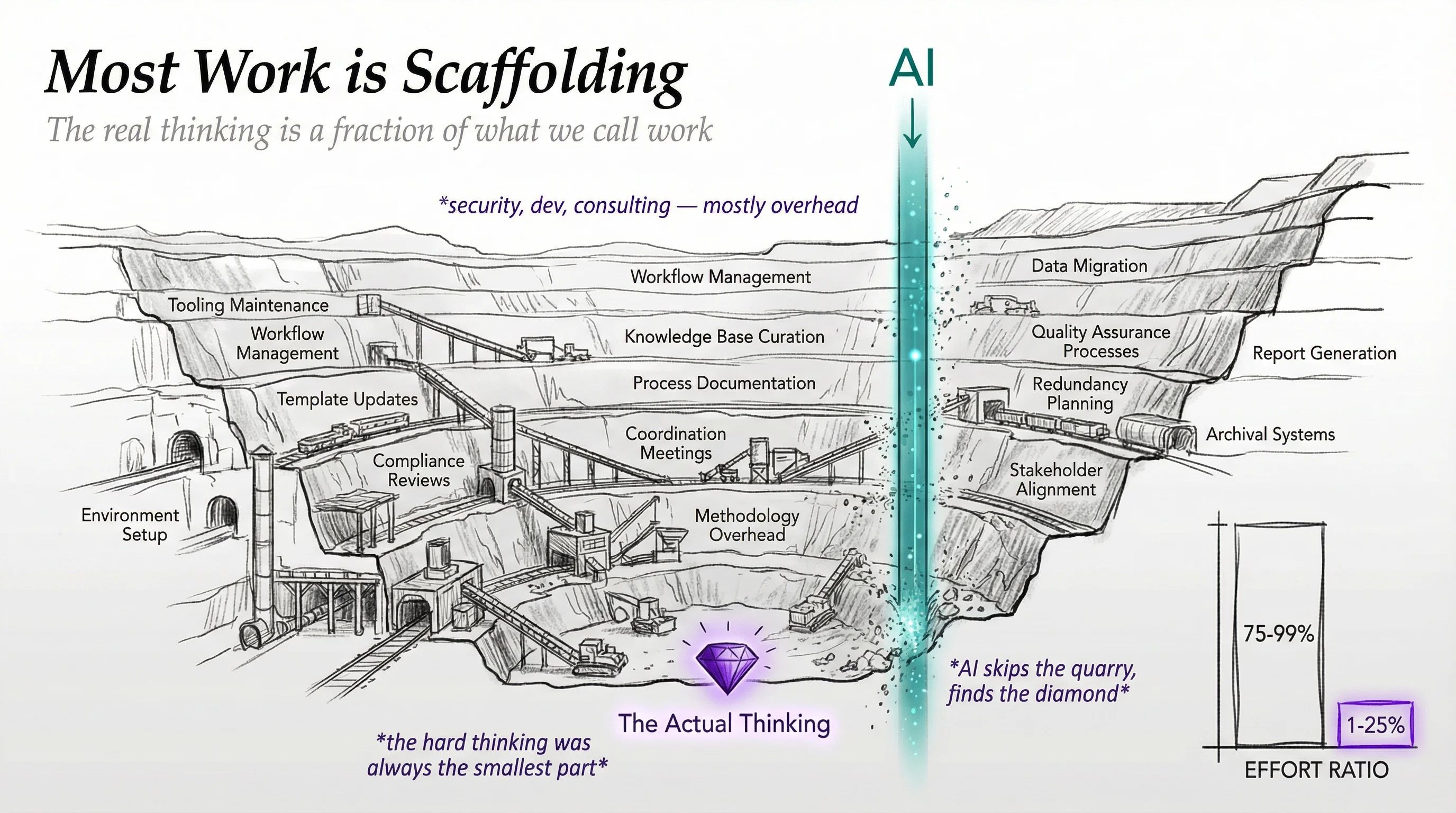

🧠 Five Ideas That Explain Where AI Work Is Actually Headed

Daniel Miessler published a framework this week for understanding the most important shifts happening in AI right now. His argument comes down to one thing: the hardest part of using AI well is knowing exactly what you want. The people and organizations that can articulate clear, testable goals will pull away from everyone else. Fast.

Miessler lays out five core ideas. First, organizations will adopt self-improving loops where AI agents autonomously troubleshoot problems, test fixes, and update their own procedures. Second, the critical skill becomes what he calls "intent-based engineering," defining ideal outcomes as short, binary pass/fail statements. Most teams can't do this well yet, and that gap is already separating early adopters from everyone else.

Third, AI makes everything visible. Costs, quality, process duration, labor distribution. He describes it as going from magic to a spreadsheet. The hiding places disappear. Fourth, he estimates that 75 to 99% of knowledge work is scaffolding, the maintenance overhead around the actual thinking. Agent-based tools automate that scaffolding, leaving only the high-value problem solving. Fifth, once expert knowledge enters documentation, code, or training data, it becomes permanent collective infrastructure. Twenty years of accumulated expertise becomes replicable overnight.

The connecting thread across all five ideas is a universal improvement cycle: map goals, execute with agents, log everything, collect failures, improve autonomously, update procedures, repeat. Miessler's point is that the compounding effect makes early adopters nearly impossible to catch. For builders and operators reading this, the question to ask is: can your team write down, in 8 to 12 words, what "good" looks like for each process you run?

If you can, you're ready for this shift. If you can't, that's the first thing to fix!
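To make intent-based engineering a little more concrete, here's a minimal Python sketch of the cycle described above, built on our own assumptions: the `Criterion` and `RunLog` structures, the example reporting process, and the run outputs are all hypothetical, not Miessler's tooling. Each criterion is a short binary statement paired with a check; every run is logged, failures are collected, and the most frequent failures point to the procedure worth fixing next.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Criterion:
    """A short, binary pass/fail statement of what "good" looks like (hypothetical structure)."""
    text: str
    check: Callable[[dict], bool]  # inspects one run's output, returns pass (True) or fail (False)

    def is_well_formed(self, min_words: int = 8, max_words: int = 12) -> bool:
        """True if the statement fits the 8-to-12-word target."""
        return min_words <= len(self.text.split()) <= max_words


@dataclass
class RunLog:
    """Every output is logged; failed criteria are kept separately for the improve step."""
    outputs: list[dict] = field(default_factory=list)
    failures: list[str] = field(default_factory=list)


def evaluate_run(output: dict, criteria: list[Criterion], log: RunLog) -> bool:
    """Log one run and record every criterion it fails. Returns True only if all pass."""
    log.outputs.append(output)
    failed = [c.text for c in criteria if not c.check(output)]
    log.failures.extend(failed)
    return not failed


def top_failures(log: RunLog, n: int = 3) -> list[tuple[str, int]]:
    """Rank criteria by failure count: candidates for the next procedure update."""
    return Counter(log.failures).most_common(n)


# Hypothetical criteria for a weekly reporting process.
criteria = [
    Criterion("Report is delivered to the team before nine on Monday",
              lambda o: o["weekday"] == "Mon" and o["hour"] < 9),
    Criterion("Every metric in the report links back to its source data",
              lambda o: o["unlinked_metrics"] == 0),
]
assert all(c.is_well_formed() for c in criteria)

# Stand-ins for outputs an agent might produce on three runs.
log = RunLog()
runs = [
    {"weekday": "Mon", "hour": 8, "unlinked_metrics": 0},
    {"weekday": "Mon", "hour": 10, "unlinked_metrics": 2},
    {"weekday": "Mon", "hour": 8, "unlinked_metrics": 1},
]
results = [evaluate_run(r, criteria, log) for r in runs]
print(results)            # [True, False, False]
print(top_failures(log))  # the source-linking criterion fails most often (2 of 3 runs)
```

The design choice worth noting is that each criterion returns only pass or fail. That's what makes the loop automatable: an agent, or a person, can rank what breaks most often without arguing about partial credit.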

Each week, we highlight AI Collective chapters doing groundbreaking work with their members around the world. Tag us on socials to be featured!

🍑 ATL | Claude Cowork Community Kickoff

Image from Anika Fisher

Atlanta's AI Collective chapter launched its Claude Cowork community with a kickoff event organized by Tyler Sztuka. The session focused on moving past the experimentation phase and into real execution with AI tools. Attendees got hands-on with multi-step workflows and autonomous task execution, with the conversation centering on the gap between treating AI as a typing assistant and actually building with it.

Anika Fisher, who attended the kickoff, noted that the community plans to run workshops, hackathons, live demos, and nonprofit-focused impact labs. The early energy is strong. If you're in the Atlanta area and want to get involved with applied AI work, this chapter is just getting started.

🌁 SF | How AI Is Reshaping the Management Role

Image from Adelina M

RoryPlans and The AI Collective co-hosted a panel on how AI is reshaping management, featuring speakers Willow Marcon and Hussein Mehanna with Ash Kumra moderating. The conversation stayed practical, focused on how managers can use AI to support better decisions rather than replacing judgment with automation.

Adelina Martiniuc from RoryPlans shared that the audience was highly engaged, with questions grounded in real operational challenges. One attendee summed it up well: sessions like this help managers understand AI as a tool to sharpen decisions, not a buzzword to chase. If you're a manager trying to figure out where AI fits into your workflow, RoryPlans published a deeper writeup on their Substack.

🗒️ Community Notes

⏳ HumanX: Next Week

We’re unveiling our HumanX programming one day at a time, and Day 3 is the one most people are coming for. Every session is open to the global AIC community, and you don’t have to be in San Francisco to join.

Register using the link for each session below to tune in from anywhere in the world. If we’ll see you at the conference, the same links apply, so grab your spot.

Thursday, April 9

10:00 – 10:45 AM PT | Human in the Loop: What Stays Human When AI Does Everything Else? | Wolf Ruzicka (Unlimit), Tony Loehr (Cline), Tyrone Ross (Cisco), Vivek Ravisankar (HackerRank) | 📍 Living Room · Discussion

11:00 – 11:45 AM PT | What Can You Do TODAY to Future-Proof Yourself? | Wallis Mills (Modern Enterprise), Maju Kuruvilla (Spangle AI), Lloyd Spencer (Workforce Mentor) | 📍 Living Room · Discussion

1:00 – 1:45 PM PT | Will You Feel the AGI? Market Reactions and the Moment Everything Changes | Henry Zhang (Hermitage Capital), Anubhav Maheshwari (Nebius) | 📍 Living Room · Discussion

2:00 – 2:45 PM PT | Closing the Loop: What Happens When We Hit AGI? | Community Forum | 📍 Living Room · Discussion

3:00 – 3:45 PM PT | AI 2027: Hot Takes Only | Clarey Zhu (Headline), Sanchit Garg (Zime.ai), Nikhil Gupta (Vapi) | 📍 Living Room · Discussion

4:00 – 4:45 PM PT | The Long View: What Are We Actually Building? | Ewa Lewandowska, Philip Rathle (Neo4j), Adelina Martiniuc (RoryPlans), Florent De Goriainoff (Fluents) | 📍 Living Room · Discussion

All times Pacific. Register via your session’s link, and we’ll see you there, wherever “there” is for you.

🗣️ The Multilingual Gap: Why LLMs Struggle Beyond English and How to Fix It

While large language models (LLMs) continue to redefine digital interaction, their performance consistently degrades when moving from English to other languages. This gap is often oversimplified as a mere lack of training data, but recent research reveals a more complex reality. Analyzing multi-turn conversational agents on the MultiChallenge benchmark points to three primary drivers of failure: data artifacts such as cultural mismatches, inherent linguistic features like pronoun-dropping, and fundamental model limitations, including tokenizer inefficiencies and English-centric reasoning.
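To see why tokenizers can be less efficient outside English, it helps to look at raw UTF-8 bytes, which is where byte-level tokenizers start. This rough Python sketch compares short greetings in four scripts. It is a crude proxy, not how any particular model tokenizes: real tokenizers learn merges that narrow the gap for well-represented scripts. ASCII characters take one byte each, Cyrillic two, and Devanagari and Japanese kana three, so without learned merges the same greeting can cost several times as many tokens.

```python
# Rough equivalents of a short greeting in four scripts (illustrative choice).
GREETINGS = {
    "English": "hello",
    "Russian": "Привет",
    "Hindi": "नमस्ते",
    "Japanese": "こんにちは",
}


def utf8_profile(text: str) -> tuple[int, int]:
    """Return (Unicode code points, UTF-8 bytes) for a string."""
    return len(text), len(text.encode("utf-8"))


if __name__ == "__main__":
    for language, text in GREETINGS.items():
        chars, n_bytes = utf8_profile(text)
        # A byte-level tokenizer with no learned merges for this script
        # would need one token per byte in the worst case.
        print(f"{language:<9} chars={chars:<2} bytes={n_bytes:<3} "
              f"bytes/char={n_bytes / chars:.1f}")
```

In this example, "hello" is 5 bytes while the Hindi greeting is 18, so the worst-case token count is more than three times higher before any learned merges help.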

The data shows that, after correcting for artifacts, model-specific limitations account for up to 80% of the performance drop. Because retraining foundation models is prohibitively expensive, the best path forward lies in targeted post-training. With high-quality, language-specific datasets and refined reinforcement learning environments, organizations can close the reasoning gap and build truly global AI that stays accurate and culturally relevant across every language.

Read more and learn how LILT can help bridge your multilingual performance gap.

🤝 Thanks to Our Premier Partner: Roam

Roam is the virtual workspace our team relies on to stay connected across time zones. It makes collaboration feel natural with shared spaces, private rooms, and built-in AI tools.

Roam’s focus on human-centered collaboration is why they’re our Premier Partner, supporting our mission to connect the builders and leaders shaping the future of AI.

Try Roam with your whole team!

🫵 Do You Belong on Our Newsletter?

Share your message with the world’s largest AI community. To inquire about partnership availability, reach out to our team below.

The AI Collective is a community of volunteers, made for volunteers. All proceeds directly fund future initiatives that benefit this community.

Before You Go…

💬 Join Slack: AI Collective

🧑‍💼 LinkedIn: The AI Collective

📸 Instagram: The AI Collective

𝕏 Twitter / X: @_AI_Collective

Get Involved in Your Community

Thank you to the thousands of volunteers around the world who make this work possible. We truly could not do this without you.

About Noah Frank

Noah is a researcher, innovation strategist, and ex-founder thinking and writing about the future of AI. His work and research explore the economics of emerging technology and organizational strategy.

About Joy Dong

Joy is a news editor, writer, and entrepreneur at the forefront of the emerging tech landscape. A former educator turned media strategist, she currently writes TEA, where she demystifies complex systems to make AI and blockchain accessible for all.