Upcoming Events

🌁 SF Bay Area

🗓️ Hungry for even more AI events? Check out SF IRL, MLOps SF, or Cerebral Valley’s spreadsheet!

🗽 New York

Thu, April 3rd: GenAI Collective @ Deep Tech Week NYC: Software’s Agentic Future

Thu, April 10th: GenAI Collective - NYC Demo Night

❗️The New York team is growing! We are seeking passionate volunteers to join us in supporting event planning, sponsorships, and marketing. Apply to Join the Team!

🏛️ DC

Thu, Apr 3rd: AI Power Hour: GenAI Collective x Prefect

Wed, Apr 16th: AI Insiders Roundtable

🇨🇦 Toronto

🇵🇱 Warsaw

Tue, Apr 8th: 🧠 GenAI Collective Poland 🧠 Kickoff Event (Warsaw)

AI Regulation in 2025: Global Unity or Deepening Divide?

Guest Article by Jasmine Hasmatali

It’s 2025. Is Global Consensus on AI Regulation Still Possible?

You can’t talk about AI without also talking about its broader impact on society… at least you shouldn’t. As AI continues to permeate every aspect of our lives, from healthcare and education to national security and democratic governance, regulatory measures are essential to ensure its development remains transparent, safe, and aligned with societal values. By allowing AI development to proceed without any oversight, we risk enabling the greatest tech transformation in human history to be exploited for harm: accelerating misinformation, intensifying surveillance, and exacerbating job displacement without time to reskill.

Generally speaking, regulation aims to promote broad principles of safety, fairness, transparency, privacy, and accountability throughout the entire AI lifecycle. While regulation often triggers resistance in tech circles for limiting innovation, it also presents a powerful opportunity for global collaboration, accelerating the widespread development and adoption of safe, effective AI systems.

The 2023 Bletchley Declaration marked a pivotal moment for the international AI landscape. It signaled the collective willingness of the international community to collaborate on AI, framing regulation not as a constraint, but as a necessary foundation for inclusive economic growth, sustainable development, and responsible innovation. This ‘world first’ approach created a hopeful vision for global consensus on regulation, which many have been expecting to build on.

But, as February’s Paris AI Action Summit demonstrated, global alignment on AI’s broader goals and regulatory approaches remains elusive. Critics like Oxford’s John Tasioulas described it as a "missed opportunity," while others were less diplomatic, calling the summit an outright failure. Amidst intensifying global competition and collaboration for AI dominance, a critical question emerges: What direction will AI regulation ultimately take? Will cooperation prevail, or will an unchecked race for AI supremacy overshadow responsible innovation?

Now more than ever, international cooperation on AI is not just an ideal; it’s crucial for ensuring the AI-driven future we all envision!

The Great Divide

Sixty countries, including China, France, India, Japan, and Canada, signed a declaration at the Paris summit on ‘inclusive and sustainable’ artificial intelligence. Notably absent from this list were the UK and US.

The reason for these absences? Well, several, but mainly the prevailing view that regulation stifles innovation. US Vice President JD Vance said that international regulatory regimes must “foster the creation of AI technology rather than strangle it”, explicitly challenging Europe's historical approach to technology regulation. Meanwhile, the UK raised concerns that the declaration failed to adequately address global governance and national security.

Despite G7 leaders reaffirming their commitment to inclusive AI governance, business-friendly and nationally-driven approaches continue to drive policy agendas, as evidenced by the Trump Administration’s Executive Order ‘Removing Barriers to American Leadership in Artificial Intelligence’ and the UK’s ‘A pro-innovation approach to AI regulation’.

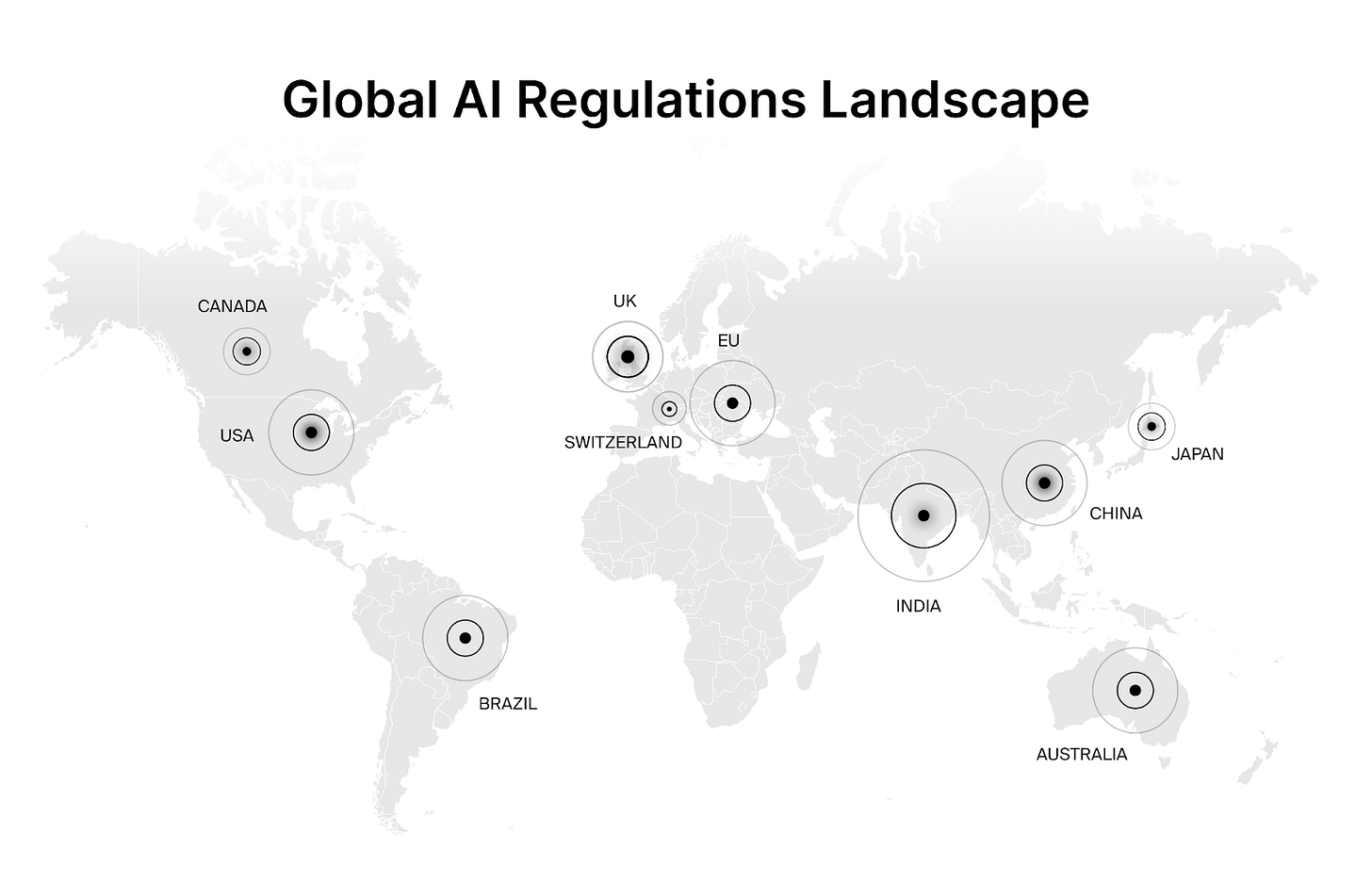

The above graphic shows the leading jurisdictions in AI Governance. I suggest viewing the attached article which outlines key regulatory publications, acts, and proposals from each country, providing a comprehensive overview of the current AI regulatory map.

A Balancing Act: Innovation vs. Regulation

The frequent framing of regulation and innovation as mutually exclusive is compromising the possibility of global cooperation. While approaches to national regulation may vary with economic priorities, political ideologies, and levels of technological development, AI is borderless by nature. The rippling impacts of AI, both good and bad, will be felt globally, so global cooperation is paramount.

Instead, the community should focus on how international collaboration will help prevent regulatory gaps, facilitate seamless collaboration through standardization, and push forward a shared agenda of democratic values. Without targeted global regulations, we risk entrusting AI’s future entirely to private entities like Anthropic, OpenAI, Google, and Meta, granting them unilateral power to define safety and trustworthiness in AI systems.

The UK and US's reluctance to sign the Paris declaration, combined with the EU and France signaling their intentions to ease regulatory pressures, complicates the ultimate goal of shared governance. Achieving unified global standards seems increasingly challenging, but no less crucial. Without consensus, can we realistically build safe, ethical, and inclusive AI?

Regulation and Beyond - A Collective Responsibility

Regulation alone won’t ensure safe and ethical AI, so we can’t get hung up on expecting one global top-down effort. Instead, we need to push for regional standards, codes of practice, research, and monitoring and evaluation. Start with a smaller group, prove alignment and value, and then continue to increase the number of collaborators.

When top-down governance falters, alternative approaches become essential. If you're reading this article, consider what role you might play (through your organization, community, or expertise) to foster the safe, inclusive, and ethical AI future that we all deserve. Whether through advocacy, education, or practical engagement, everyone has a stake in shaping this critical juncture in human history.

The question in 2025 isn’t whether global AI regulation is achievable. It's whether we have the collective will to roll up our sleeves and make it happen.

Events Spotlight

🌁 SF Demo Night 🚀

Last week, 300+ AI pioneers packed the AWS GenAI Loft to witness the next wave of AI innovation with us and Product Hunt!

100+ startups applied for a chance to ENTER THE ARENA 🏟️ and the finalists did not disappoint! 🤩

🔥 FEATURED STARTUPS 🔥

🗣️ Wispr Flow – Hyper-accurate voice transcription (Sahaj)

🧠 Entelligence.AI – Engineering team ops assistant (Aiswarya)

💥 The energy was electric. The crowd was engaged. And after 10,000+ virtual claps and hundreds of real-time votes, the audience crowned their winners:

🏆 Best Overall: Wispr Flow

🎨 Most Creative: AthenaHQ

Huge shoutout to our key sponsor Ampersand for fueling the next wave of AI builders!

This is quickly becoming a global network of events that elevates the entire tech ecosystem worldwide. We intend for this to become the most impactful event series in tech history. THANK YOU ALL for being a part of it!! 🚀

Join the Community!

💬 Slack: GenAI Collective

𝕏 Twitter / X: @GenAICollective

🧑‍💼 LinkedIn: The GenAI Collective

📸 Instagram: @GenAICollective

We are a volunteer, non-profit organization – all proceeds solely fund future efforts for the benefit of this incredible community!

Join the GenAI Creative Studio!

The GenAI Collective is on the lookout for creative pros who are passionate about AI and storytelling. We need sharp, innovative minds to help shape our brand across social media, newsletters, PR, podcasts, and beyond. If you're ready to craft compelling content at the intersection of tech and creativity, let’s talk.

We are currently looking for:

Creative Director

Graphic Designers

Videographers/Editors

Photographers

Producers

Animators

Marketing Copywriters

While these roles are volunteer-based, the perks are big. You'll get exclusive access to all GenAI Collective events, connect with a cutting-edge AI community, and collaborate with a fast-growing team. Plus, every project you contribute to comes with a shout-out to our highly engaged audience of AI pros and industry leaders—giving your work the visibility it deserves.

If you're a creative professional eager to join a team of AI experts dedicated to building global AI communities and ready to have fun, contact us. This is an amazing opportunity.

About Jasmine Hasmatali

Jasmine Hasmatali is a former founder, recent Master's graduate, and Communications Director at Aurix. She holds a Master of Laws in Human Rights from the University of Edinburgh and is passionate about using her legal background to advocate for inclusive AI futures that benefit everyone. Her research is oriented towards bridging the gap between rights, technology, and society. When not working, you can find her with friends, at the gym, or travelling if she’s lucky.

About Eric Fett

Eric leads the development of the newsletter and online presence. He is currently an investor at NGP Capital where he focuses on Series A/B investments across enterprise AI, cybersecurity, and industrial technology. He’s passionate about working with early-stage visionaries on their quest to create a better future. When not working, you can find him on a soccer field or at a sushi bar! 🍣